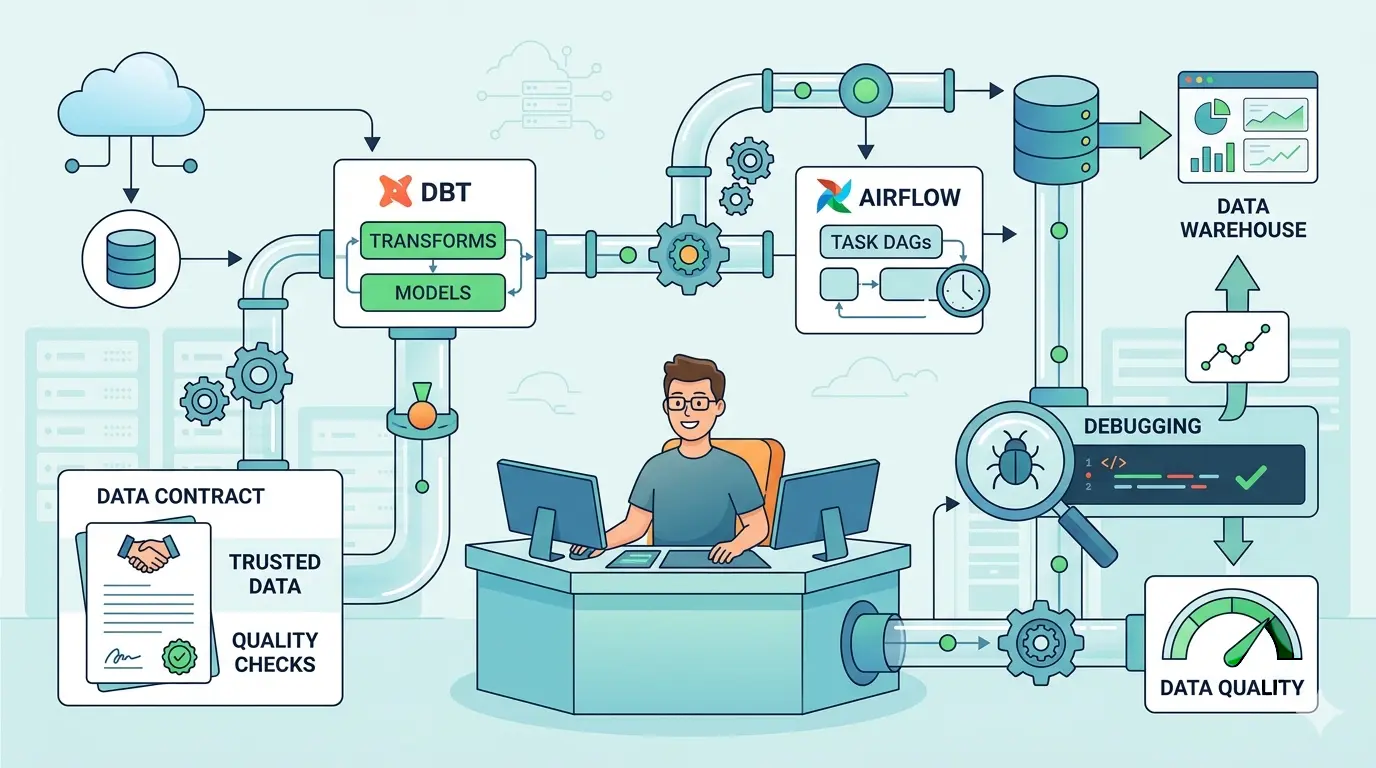

Data engineers rarely get blocked by SQL syntax alone. The real slowdown comes from unclear model grain, noisy upstream changes, brittle DAGs, bad handoffs between producers and consumers, and incident threads that never quite explain what failed. ChatGPT, Gemini, Claude, and DeepSeek can all help, but only when the prompt gives them real warehouse context, operational constraints, and a concrete success condition.

These prompts are optimized as a universal foundation for data engineers working across dbt projects, Airflow orchestration, ETL debugging, and contract-driven data delivery. The wording transfers well across ChatGPT, Gemini, Claude, and DeepSeek, while each model has different strengths depending on whether you need flexible drafting, careful reasoning, broad context synthesis, or a more technical breakdown. If you want more adjacent role-specific workflows, TipTinker’s Prompts archive is the broad hub.

Turn a Raw Source Feed Into a Clean dbt Model Plan

Model Recommendation: Claude is often the better fit when you need a structured model plan, explicit assumptions, and clean separation between staging, intermediate, and mart logic.

You are a senior analytics engineer helping me design a dbt model.

Context:

- Warehouse: {Snowflake | BigQuery | Redshift | Databricks | Postgres}

- Raw source tables: {table names}

- Target model purpose: {business use case}

- Expected grain: {one row per ...}

- Required transformations: {casts, joins, filters, business rules}

- Known source issues: {nulls, duplicates, late-arriving data, schema drift}

- Downstream consumers: {BI dashboard, ML feature table, reverse ETL, API}

Task:

1. Define the correct model grain and unique key.

2. Recommend which logic belongs in staging, intermediate, and mart layers.

3. Identify risky joins, ambiguous columns, and naming problems.

4. Propose derived fields, data type fixes, and freshness assumptions.

5. Return:

- a model plan table

- a SQL skeleton for the core model

- a dbt YAML skeleton with tests

- a short list of open questions before implementation

Be explicit about assumptions and do not hide uncertainty.

The Payoff: This prompt turns vague requirements into a model blueprint you can review before writing production SQL. It is especially useful when stakeholders know the business goal but have not yet defined grain, ownership, or test coverage.

Refactor a Messy dbt Model Into Maintainable Layers

Model Recommendation: Claude works well for this kind of refactor because it stays disciplined about structure, naming, and responsibility boundaries.

Act as a staff analytics engineer reviewing a dbt model that has become too large.

I will give you:

- the current SQL

- the current schema.yml entries

- the intended business output

Your job:

1. Identify which logic should move into staging, intermediate, or mart models.

2. Flag repeated expressions, hidden business logic, and fragile joins.

3. Suggest clearer model names, column names, and folder placement.

4. Preserve behavior unless you explicitly mark a change as a semantic correction.

5. Return:

- a proposed refactor plan in order

- the new model breakdown

- what tests should move with each model

- migration risks for downstream references

Optimize for maintainability, debuggability, and reviewability rather than clever SQL.

The Payoff: Instead of asking an AI model to rewrite a large file blindly, you get a safer refactor sequence with clear boundaries. That makes code review easier and lowers the chance of breaking downstream dashboards or metrics.

Generate dbt Tests That Match Real Failure Modes

Model Recommendation: DeepSeek is useful when you want a more systematic breakdown of edge cases, null behavior, uniqueness rules, and dependency risks.

You are reviewing a dbt model and designing high-value tests.

Context:

- Model name: {model}

- Grain: {grain}

- Important columns: {columns and meanings}

- Business-critical assumptions: {assumptions}

- Upstream dependencies: {sources/models}

- Known failure history: {duplicates, stale records, bad enum values, unexpected nulls}

Task:

1. Recommend generic tests, singular tests, and source tests.

2. Separate must-have tests from nice-to-have tests.

3. For each test, explain the exact failure mode it protects against.

4. Suggest severity tags or alert priority.

5. Return example YAML plus any custom SQL tests I should add.

Do not list generic tests without tying them to a concrete business or pipeline risk.

The Payoff: Good dbt tests are not a checklist. They are a map of how the model can fail in production. This prompt helps you move from generic uniqueness and not-null defaults to tests that actually reflect incident history.

Tune a Slow Incremental Model Before It Burns Warehouse Spend

Model Recommendation: DeepSeek is often the better fit for technical decomposition when the problem includes SQL patterns, partition logic, merge strategy, and performance tradeoffs.

Act as a senior data engineer optimizing a slow dbt incremental model.

I will provide:

- the current model SQL

- the materialization settings

- warehouse type

- row counts or rough table size

- known slow sections from query history or EXPLAIN output

Analyze the model and return:

1. the likely performance bottlenecks

2. whether the incremental strategy is appropriate

3. partitioning, clustering, sorting, or pruning ideas

4. joins or CTEs that should be rewritten

5. whether snapshots, pre-aggregation, or intermediate models would help

6. a revised implementation plan ordered by impact and risk

Optimize for correctness first, then cost, then speed. Flag any tradeoff that could change semantics.

The Payoff: This is useful when the model still “works” but has become expensive, slow, or operationally fragile. It gives you a practical optimization sequence instead of random SQL tweaks.

Diagnose a Broken Airflow DAG From Logs and Task State

Model Recommendation: Gemini works well when you need to synthesize multiple logs, task states, config snippets, and scheduler details in one pass.

You are an Airflow production incident analyst.

I will provide:

- DAG code or the relevant task definitions

- task logs

- scheduler or worker error messages

- retry settings

- upstream/downstream dependencies

- any recent deployment or config changes

Task:

1. Summarize the failure in plain English.

2. Identify the most likely root cause and 2 plausible alternatives.

3. Separate orchestration failure from code failure and data failure.

4. Recommend the fastest safe triage steps.

5. Recommend the durable fix.

6. Return a short runbook entry I can paste into incident notes.

Do not give generic Airflow advice. Tie every recommendation to the evidence in the logs.

The Payoff: This prompt is strongest when the failure signal is scattered across scheduler logs, retries, operator config, and task output. Teams that blur the line between orchestration and platform automation can also borrow ideas from DevOps engineers’ AI prompts for CI/CD and SRE workflows.

Debug ETL Row Mismatches, Null Explosions, and Duplicate Loads

Model Recommendation: DeepSeek is useful here because ETL debugging usually needs a disciplined hypothesis tree rather than a loose brainstorm.

Act as a data engineer debugging an ETL discrepancy.

Problem:

- Source row count: {count or estimate}

- Destination row count: {count or estimate}

- Expected business grain: {grain}

- Symptoms: {duplicates, missing records, null spike, late-arriving updates, bad joins}

Context:

- Source system: {system}

- Extraction method: {batch, CDC, API pull, file ingest}

- Transform layer: {SQL, Spark, dbt, Python}

- Load strategy: {append, merge, overwrite, upsert}

- Recent changes: {schema, logic, scheduler, source behavior}

Task:

1. Build a ranked hypothesis list.

2. Give a step-by-step debugging checklist.

3. Show which counts, filters, and join validations to run first.

4. Identify where idempotency may be broken.

5. Return a compact SQL or pseudocode validation plan.

Keep the order practical for an on-call engineer under time pressure.

The Payoff: Instead of poking at the pipeline from five directions at once, you get a triage order that narrows the fault domain quickly. That matters when a mismatch could be coming from extraction logic, merge logic, or silent schema changes.

Assess the Blast Radius of Schema Drift Before It Breaks Downstream Models

Model Recommendation: Gemini is often the better fit when you want to compare producer changes, model lineage, tests, and consumer expectations across several documents or snippets.

You are helping me analyze schema drift in a data platform.

I will provide:

- old schema

- new schema

- impacted dbt models or transformation code

- known downstream tables, dashboards, or services

- any existing data contract notes

Task:

1. Identify breaking changes, soft changes, and cosmetic changes.

2. Explain which downstream assets are likely impacted and why.

3. Flag type changes, enum expansion, nullability changes, and renamed columns.

4. Recommend immediate mitigation, short-term compatibility steps, and long-term prevention.

5. Return a blast-radius summary table plus an action plan by owner.

Prioritize operational impact and backward compatibility.

The Payoff: This prompt helps you turn a vague “the schema changed” warning into an owner-by-owner action list. It is especially useful when the producer sees the change as minor but downstream models, dashboards, or feature pipelines do not.

Draft or Review a Data Contract That Engineers Will Actually Use

Model Recommendation: Claude works well when the task needs precise wording, explicit guarantees, and careful separation between producer promises and consumer assumptions.

Act as a data platform architect helping me write a practical data contract.

Context:

- Dataset or event name: {name}

- Producer team: {team}

- Consumer teams: {teams}

- Business purpose: {purpose}

- Required fields: {fields}

- SLA or freshness needs: {requirements}

- Expected grain and keys: {grain}

- Known edge cases: {late data, nulls, enum expansion, deletions, backfills}

Task:

1. Draft a concise data contract.

2. Separate hard guarantees from non-guarantees.

3. Define schema expectations, freshness, quality checks, ownership, and change-management rules.

4. Add a short section for backward-compatibility policy.

5. Return the result in a format suitable for engineering docs.

Write for engineers and analytics consumers, not for legal review.

The Payoff: A useful data contract reduces surprise more than it reduces writing. It also creates cleaner handoffs to analytics consumers working through AI prompts for SQL, Tableau, and Power BI tasks because producer guarantees are spelled out before dashboards or semantic models depend on them.

Plan a Safe Historical Backfill Without Replaying Old Mistakes

Model Recommendation: DeepSeek is a strong fit when a backfill involves risk ordering, idempotency checks, partition logic, and operational safeguards.

You are helping me plan a production backfill.

Context:

- Pipeline or model: {name}

- Time range to backfill: {range}

- Current load method: {append, merge, snapshot, overwrite}

- Warehouse or compute engine: {engine}

- Data volume estimate: {estimate}

- Downstream dependencies: {dependencies}

- Constraints: {cost limit, time window, no dashboard disruption, retry limits}

Task:

1. Identify the key risks of this backfill.

2. Explain whether the backfill should run in place, in shadow tables, or in partition batches.

3. Define validation checks before, during, and after replay.

4. Recommend rollback or containment steps.

5. Return a production-safe execution checklist.

Assume the audience is an engineer who must run this carefully, not a manager reading a summary.

The Payoff: Backfills fail when teams focus only on compute and forget consumer impact, duplicate risk, or partial replay behavior. This prompt helps you think through the operational shape of the recovery before you touch production data.

Write a Tight RCA and Stakeholder Update After a Pipeline Incident

Model Recommendation: ChatGPT works well for this kind of day-to-day drafting when you already know the facts and need a clean, readable summary fast.

You are helping me write a post-incident summary for a data pipeline failure.

I will provide:

- incident timeline

- affected datasets or dashboards

- root cause

- mitigation steps

- permanent fix

- any open follow-up items

Task:

1. Write a short stakeholder update for non-engineers.

2. Write a technical RCA section for engineers.

3. Clearly separate impact, root cause, mitigation, and prevention.

4. Avoid defensive language, vague wording, and filler.

5. End with action items and owners.

Keep the tone factual and concise. Do not hide uncertainty if some facts are still pending.

The Payoff: The engineering work is not finished when the DAG is green again. A clear RCA preserves operational memory, speeds up future triage, and prevents the same failure from being rediscovered under a different label.

Pro-Tip: Chain Prompts Instead of Stuffing Everything Into One Request

For data engineering, a single mega-prompt usually mixes too many jobs: context gathering, failure analysis, implementation planning, and communication. Split the workflow into passes. First ask the model to extract facts from logs, schemas, or manifests. Then ask it to rank hypotheses or identify risks. Only after that should you ask for SQL, YAML, DAG edits, or incident copy. If the context is getting large, estimate the payload first with the AI Token Calculator so you trim noise before the model trims something important for you.

The strongest AI workflow for data engineers is not one perfect prompt. It is the habit of giving the model the right logs, schema details, ownership boundaries, and validation criteria so every answer is easier to review, safer to ship, and easier to trust.