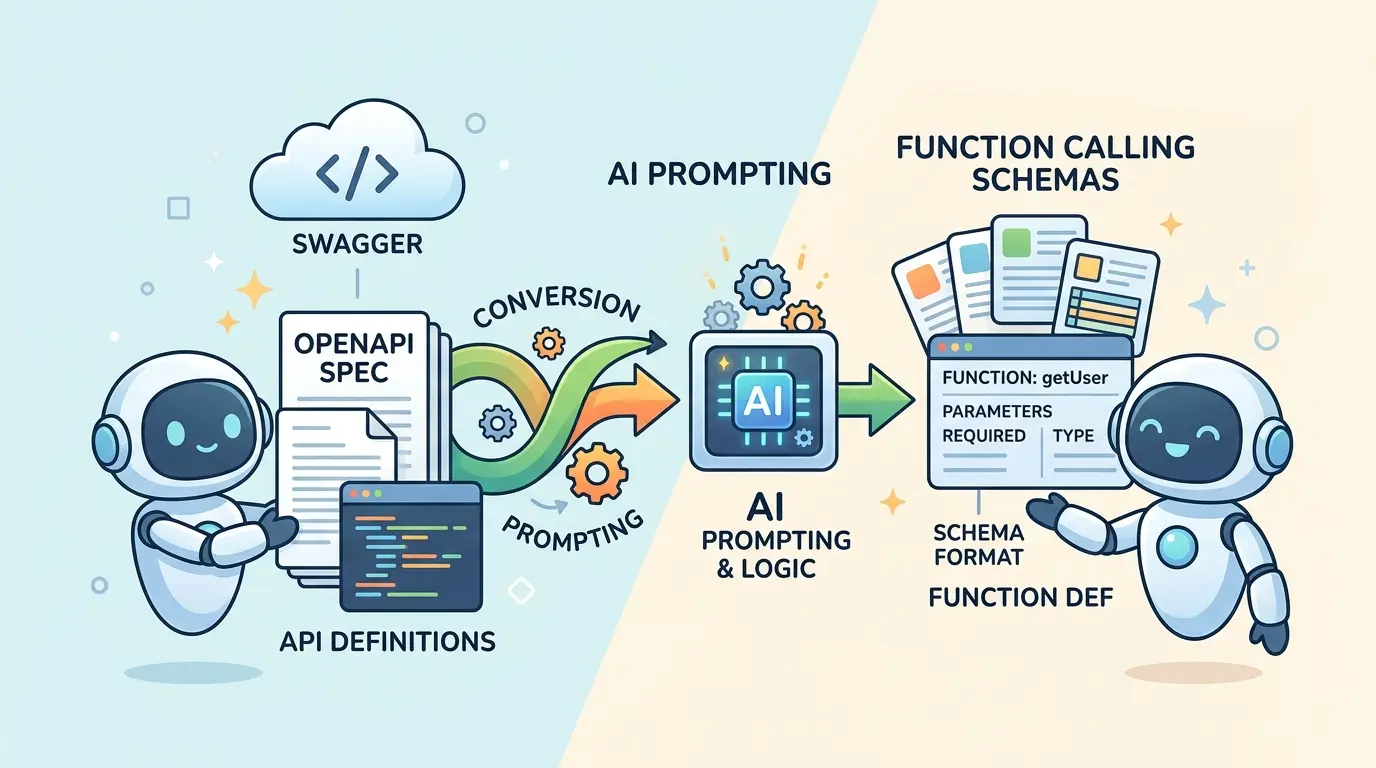

Most teams building AI agents do not get blocked by the model itself. They get blocked by the contract layer between a developer-facing API specification and a model-facing tool schema. A raw OpenAPI file is full of transport details, shared components, auth rules, response payloads, and internal parameters. A function-calling schema needs something tighter: a clean tool name, a short task description, and an input contract the model can fill without guessing.

Whether you use ChatGPT, Gemini, Claude, or DeepSeek, the workflow is largely the same: decide which operations should be exposed, flatten the request shape, remove server-side noise, and test the result against real agent tasks. The prompts below are written as a model-agnostic foundation for AI engineers, backend developers, and agent builders who need reliable tool schemas instead of fragile one-shot conversions. Each model has different strengths, but the conversion discipline is portable.

Why Direct OpenAPI-To-Tool Conversion Breaks Down

OpenAPI was designed for client generation, documentation, and contract validation. Tool schemas for agents serve a different purpose: they help a model choose the right action and construct valid arguments under uncertainty.

That difference creates predictable failure modes:

- Deep `$ref` trees hide required arguments behind reusable components.

- `allOf`, `oneOf`, and nullable branches create ambiguity that a model may resolve inconsistently.

- Headers, auth fields, and server defaults get exposed even though the model should never control them.

- Operation names copied from HTTP semantics are often weak selectors for tool choice.

- Response schemas consume attention even though the model usually needs the input contract first.

If you want a faster starting point for the mechanical part of the workflow, TipTinker’s OpenAPI Spec to LLM Function Calling Schema Generator is useful. The prompts below are what help you turn that first pass into a schema an agent can actually use safely.

What A Good Function Calling Schema Should Keep

A strong agent-facing schema preserves only what improves tool selection and argument construction.

In practice, that usually means keeping:

- A task-oriented tool name that reflects user intent rather than HTTP plumbing

- A concise description that makes the scope of the tool obvious

- Only model-controllable inputs from path, query, and body fields

- Enums, constraints, and required fields that reduce invalid calls

- Examples and defaults when they genuinely help the model choose valid values

It usually means dropping or hiding:

- Auth headers and tokens

- Server-generated IDs unless the user truly provides them

- Transport metadata such as content negotiation details

- Response payload complexity unless your framework requires a response schema for post-call reasoning

- Internal admin or dangerous endpoints that should not be agent-accessible at all
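To make the keep and drop lists concrete, here is a minimal sketch of what an agent-facing tool definition might look like after cleanup. The tool name, fields, enum values, and limits are all hypothetical and chosen only for illustration; the `name` / `description` / `parameters` layout is a common convention, not a requirement of any particular provider.

```python
# Hypothetical agent-facing tool definition. Every name and value is illustrative.
search_customer_orders = {
    # Task-oriented name instead of something like "get_v2_orders".
    "name": "search_customer_orders",
    # Short description that states the action, the object, and a limitation.
    "description": (
        "Find a customer's orders by status and date. "
        "Read-only; returns at most 50 orders."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "customer_email": {
                "type": "string",
                "description": "Email address the customer ordered with.",
            },
            # Enum keeps the model from inventing status values.
            "status": {
                "type": "string",
                "enum": ["pending", "shipped", "delivered", "cancelled"],
            },
            "placed_after": {
                "type": "string",
                "format": "date",
                "description": "ISO 8601 date, for example 2024-01-31.",
            },
            # Constraints and a default reduce invalid or oversized calls.
            "limit": {"type": "integer", "minimum": 1, "maximum": 50, "default": 10},
        },
        "required": ["customer_email"],
        "additionalProperties": False,
    },
}

# Deliberately absent: Authorization header, tenant ID, Accept/Content-Type
# negotiation, server-generated order IDs, and the response schema.
```

Everything the model sees is something it can plausibly fill from the conversation. Anything else belongs in the code that executes the call.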

Prompt 1: Select Only The Operations Worth Exposing

Model Recommendation: Gemini works well when you need to synthesize large or multi-file API specs and identify which operations map cleanly to user-facing agent tasks.

You are acting as an API-to-agent tooling architect.

I will provide an OpenAPI spec or selected endpoint excerpts.

Your job is to decide which operations should be exposed to an AI agent as callable tools.

For each operation, return:

1. HTTP method and path

2. Existing operationId

3. Keep or hide

4. Reason for that decision

5. Primary user intent the operation serves

6. Whether the operation is safe for autonomous use, confirmation-gated use, or human-only use

7. The ideal agent-facing tool name

8. The minimum user-supplied inputs required

Hide operations that are:

- internal maintenance

- auth refresh or credential exchange

- bulk destructive admin actions

- low-level helper endpoints that should remain behind orchestration code

Then return:

- a shortlist of recommended tools

- any missing orchestration wrappers I should build before exposing the API to an agent

Spec:

[PASTE OPENAPI SPEC OR SELECTED PATHS]

The Payoff: Most broken toolsets expose too many endpoints. This prompt forces a product decision before a schema decision, which keeps the agent surface smaller, safer, and easier for the model to search.
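Before pasting a large spec into the prompt, it can help to produce a mechanical inventory first. The sketch below assumes the spec is already parsed into a Python dict and that operations carry tags such as `internal`, `admin`, or `auth`. That tagging convention and the `hint` labels are assumptions, and the output is only a first pass to review, not a final exposure decision.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}
HIDE_TAGS = {"internal", "admin", "auth"}  # assumption: your spec tags operations this way


def inventory_operations(spec):
    """List every operation in an OpenAPI dict with a first-pass keep/hide hint."""
    rows = []
    for path, path_item in spec.get("paths", {}).items():
        for method, op in path_item.items():
            # Path items also hold non-operation keys such as "parameters".
            if method.lower() not in HTTP_METHODS:
                continue
            tags = {t.lower() for t in op.get("tags", [])}
            rows.append({
                "method": method.upper(),
                "path": path,
                "operation_id": op.get("operationId"),
                "hint": "hide" if tags & HIDE_TAGS else "review",
            })
    return rows


# Hypothetical two-path spec, already parsed from YAML or JSON.
spec = {"paths": {
    "/orders/{id}": {
        "parameters": [{"name": "id", "in": "path", "required": True}],
        "get": {"operationId": "getOrder", "tags": ["orders"]},
    },
    "/admin/purge": {"post": {"operationId": "purgeAll", "tags": ["Admin"]}},
}}

for row in inventory_operations(spec):
    print(row)
```

The rows marked `review` are the ones worth sending through the prompt; the rows marked `hide` still deserve a quick human check in case the tags are wrong.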

Prompt 2: Flatten $ref, allOf, oneOf, And Nullable Fields Into A Canonical Input Contract

Model Recommendation: DeepSeek is useful when you need structured decomposition across nested components, schema composition, and conflicting field rules.

You are converting an OpenAPI operation into a canonical agent input schema.

I will provide:

- the operation

- referenced component schemas

- parameter definitions

Your task is to flatten the request contract into one agent-facing input schema.

Rules:

- resolve `$ref` references

- merge `allOf` branches

- explain how to handle `oneOf` and `anyOf`

- represent nullable fields clearly

- preserve enums, min/max constraints, patterns, and required fields

- remove response-only or server-only fields

Return:

1. Canonical field list

2. Final JSON Schema for agent arguments

3. Required fields

4. Optional fields

5. Fields that should be hidden from the model

6. Ambiguities or branches that need wrapper logic instead of direct exposure

7. Notes on defaults and validation constraints

Operation:

[PASTE OPERATION]

Referenced components:

[PASTE COMPONENT SCHEMAS]

The Payoff: This gives you a clean intermediate contract instead of a brittle copy of the original spec. Once the canonical schema is correct, provider-specific function declarations become much easier.
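The mechanical part of flattening can also be checked in code. The following is a minimal sketch, assuming all references are local (`#/...`) and that `allOf` branches only contribute `properties` and `required`. It drops `readOnly` properties as server-only fields, and it leaves `oneOf`, `anyOf`, and array `items` untouched because those usually need a human or wrapper-level decision. The schema names in the example are hypothetical.

```python
def resolve_ref(ref, spec):
    """Follow a local JSON pointer such as '#/components/schemas/Order'."""
    if not ref.startswith("#/"):
        raise ValueError(f"only local refs are handled in this sketch: {ref}")
    node = spec
    for part in ref[2:].split("/"):
        node = node[part.replace("~1", "/").replace("~0", "~")]
    return node


def flatten(schema, spec, seen=()):
    """Return a copy of schema with local $refs inlined and allOf branches merged."""
    if "$ref" in schema:
        ref = schema["$ref"]
        if ref in seen:
            raise ValueError(f"circular $ref: {ref}")
        return flatten(resolve_ref(ref, spec), spec, seen + (ref,))

    out = {k: v for k, v in schema.items()
           if k not in ("allOf", "properties", "required")}
    props, required = {}, []

    # Merge allOf branches first so fields declared directly on the schema win.
    for branch in schema.get("allOf", []):
        flat = flatten(branch, spec, seen)
        props.update(flat.get("properties", {}))
        required += flat.get("required", [])
        for key, value in flat.items():
            if key not in ("properties", "required"):
                out.setdefault(key, value)

    for name, sub in schema.get("properties", {}).items():
        props[name] = flatten(sub, spec, seen)
    required += schema.get("required", [])

    # Treat readOnly properties as server-only and hide them from the agent.
    dropped = {name for name, p in props.items() if p.get("readOnly")}
    props = {name: p for name, p in props.items() if name not in dropped}
    required = [r for r in dict.fromkeys(required) if r not in dropped]

    if props:
        out["properties"] = props
    if required:
        out["required"] = required
    return out


# Hypothetical component schemas for illustration.
spec = {"components": {"schemas": {
    "Base": {
        "type": "object",
        "properties": {"id": {"type": "string", "readOnly": True},
                       "note": {"type": "string"}},
        "required": ["id"],
    },
    "Address": {"type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"]},
    "CreateOrder": {"allOf": [
        {"$ref": "#/components/schemas/Base"},
        {"type": "object",
         "properties": {"sku": {"type": "string"},
                        "ship_to": {"$ref": "#/components/schemas/Address"}},
         "required": ["sku"]},
    ]},
}}}

flat = flatten(spec["components"]["schemas"]["CreateOrder"], spec)
print(sorted(flat["properties"]), flat["required"])
```

Comparing this mechanical result with the model's canonical field list is a cheap way to catch a required field that got lost behind a reference.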

Prompt 3: Rewrite Operation Names And Descriptions For Better Tool Selection

Model Recommendation: Claude is often the better fit when you need careful naming, scope wording, and structured descriptions that reduce tool confusion.

You are optimizing API operations for AI tool selection.

I will give you an operationId, summary, description, and endpoint behavior.

Rewrite them into an agent-facing tool contract.

Rules:

- use names based on user intent, not HTTP verbs alone

- make the scope obvious from the name

- avoid collisions with other tools

- avoid vague names such as manage, handle, process, data, item, or execute unless qualified

- keep the description short but specific

- mention the main action, object, and important limitation

Return:

1. Best tool name

2. Two alternate names

3. Final tool description

4. Why the chosen name is easier for a model to select correctly

5. Any naming collisions or ambiguity risks

Operation details:

[PASTE OPERATIONID, SUMMARY, DESCRIPTION, METHOD, PATH, AND PURPOSE]

The Payoff: Good naming reduces wrong-tool calls before validation even starts. A model can only select the correct function if the function looks semantically distinct from its neighbors.
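A small lint pass can catch the most obvious naming problems before or after the prompt runs. This is a rough heuristic sketch, not a substitute for the review above: the vague-word list comes from the prompt's rules, while the threshold (at least two specific words alongside a vague one) and the collision rule (same words regardless of casing or order) are assumptions chosen for illustration.

```python
import re

VAGUE_WORDS = {"manage", "handle", "process", "data", "item", "execute"}


def tokenize(name):
    """Split snake_case, kebab-case, or camelCase into lowercase words."""
    snake = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", name)
    return [t for t in re.split(r"[^a-z0-9]+", snake.lower()) if t]


def lint_tool_names(names):
    """Return (name, problem) pairs for vague or colliding tool names."""
    problems = []
    first_seen = {}
    for name in names:
        tokens = tokenize(name)
        specific = [t for t in tokens if t not in VAGUE_WORDS]
        # A vague word is tolerated only when enough specific words qualify it.
        if len(specific) < len(tokens) and len(specific) < 2:
            problems.append((name, "vague word without enough qualification"))
        key = frozenset(tokens)
        if key in first_seen:
            problems.append((name, f"collides with {first_seen[key]}"))
        else:
            first_seen[key] = name
    return problems


print(lint_tool_names(
    ["manage_data", "process_refund_for_order", "getOrder", "get_order"]
))
```

In this example `manage_data` is flagged as vague and `get_order` is flagged as colliding with `getOrder`, while `process_refund_for_order` passes because the vague verb is qualified.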

Prompt 4: Separate User-Supplied Arguments From Hidden Runtime Context

Model Recommendation: ChatGPT is a strong day-to-day choice for operational schema cleanup and argument classification across many endpoints.

You are reviewing an API operation for agent-safe argument exposure.

Classify every input field into one of these buckets:

- user supplied

- derived from conversation context

- injected by application middleware

- server generated

- should never be model controlled

Return:

1. A table of all parameters and body fields with one classification each

2. The final set of model-controllable arguments

3. The runtime context my application should inject outside the model

4. Dangerous fields that should be hidden even if they exist in the OpenAPI spec

5. A cleaned function-calling schema using only model-controllable arguments

Operation definition:

[PASTE PARAMETERS, REQUEST BODY, AUTH DETAILS, AND EXAMPLE PAYLOAD]

The Payoff: This is where many agent integrations fail. The model does not need to control auth headers, tenant IDs, request signatures, or policy flags. Keeping those out of the tool contract removes an entire class of bad calls.
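Once the classification is done, the split can be enforced in code rather than left to the prompt. The sketch below assumes a hypothetical set of hidden field names and a hypothetical refund operation. It strips hidden fields from the schema the model sees, then merges runtime context back in when the real request is built, rejecting any attempt by the model to set a hidden field.

```python
# Hypothetical field names that must never be model-controlled.
HIDDEN_FIELDS = {"tenant_id", "Authorization", "X-Request-Signature"}


def model_facing_schema(schema, hidden=HIDDEN_FIELDS):
    """Return a copy of the input schema without hidden fields."""
    props = {k: v for k, v in schema.get("properties", {}).items()
             if k not in hidden}
    return {
        **schema,
        "properties": props,
        "required": [r for r in schema.get("required", []) if r not in hidden],
        "additionalProperties": False,
    }


def build_request(model_args, runtime_context, hidden=HIDDEN_FIELDS):
    """Combine model arguments with context injected by the application."""
    smuggled = set(model_args) & set(hidden)
    if smuggled:
        raise ValueError(f"model tried to set hidden fields: {sorted(smuggled)}")
    # Runtime context is applied last so it always wins.
    return {**model_args, **runtime_context}


# Hypothetical operation input as it appears in the original spec.
refund_schema = {
    "type": "object",
    "properties": {
        "order_id": {"type": "string"},
        "tenant_id": {"type": "string"},
    },
    "required": ["order_id", "tenant_id"],
}

print(model_facing_schema(refund_schema))
print(build_request({"order_id": "A1"}, {"tenant_id": "t-42"}))
```

Rejecting smuggled fields outright, instead of silently overwriting them, makes injection attempts visible in logs.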

Prompt 5: Generate Provider-Ready Function Calling Schemas From The Canonical Contract

Model Recommendation: ChatGPT works well for repeatable transformation work when you need fast conversion from a neutral schema into tool definitions used across multiple agent runtimes.

You are converting a canonical agent input contract into function-calling schemas.

I will provide a normalized tool name, description, and canonical JSON Schema.

Return:

1. A provider-neutral function schema with name, description, and input schema

2. A strict JSON Schema version for runtime validation

3. Notes on any provider-specific limitations I should watch for

4. A compact example call with valid arguments

Requirements:

- keep descriptions crisp and task-focused

- preserve enums and required fields

- avoid unsupported constructs if a simpler equivalent is available

- prefer explicit nested objects over vague free-form maps when possible

- flag any areas where wrapper code is safer than direct schema exposure

Canonical contract:

[PASTE TOOL NAME, DESCRIPTION, AND CANONICAL JSON SCHEMA]

The Payoff: A canonical contract is your source of truth. This prompt turns it into deployable schemas without re-litigating business logic every time you switch SDKs or agent frameworks.
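The final wrapping step is usually thin enough to keep in code. As a sketch, the two helpers below wrap one canonical contract in the tool shapes used by OpenAI-style chat completion APIs and by Anthropic's Messages API as commonly documented at the time of writing. Treat both shapes as assumptions to verify against current provider documentation; the contract itself is hypothetical.

```python
def to_openai_style_tool(contract):
    """Wrap a canonical contract in the OpenAI-style 'function' tool shape."""
    return {
        "type": "function",
        "function": {
            "name": contract["name"],
            "description": contract["description"],
            "parameters": contract["input_schema"],
        },
    }


def to_anthropic_style_tool(contract):
    """Wrap a canonical contract in the Anthropic-style tool shape."""
    return {
        "name": contract["name"],
        "description": contract["description"],
        "input_schema": contract["input_schema"],
    }


# Hypothetical canonical contract; this stays the single source of truth.
contract = {
    "name": "cancel_pending_order",
    "description": "Cancel one order that has not shipped yet.",
    "input_schema": {
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
        "additionalProperties": False,
    },
}

print(to_openai_style_tool(contract))
print(to_anthropic_style_tool(contract))
```

Because both outputs are derived from the same dict, a change to the canonical contract propagates to every runtime without hand edits.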

Prompt 6: Build Examples, Validation Cases, And Regression Fixtures

Model Recommendation: DeepSeek is often the better fit for structured test generation, edge-case coverage, and failure analysis.

You are generating validation assets for a function-calling schema.

Given the tool definition below, produce:

1. one happy-path example

2. three realistic variant examples

3. three invalid examples that should fail validation

4. the reason each invalid example should fail

5. edge cases involving missing required fields, enum violations, nullability, and nested-object mistakes

6. a compact regression checklist for future schema changes

Keep the examples realistic for an AI agent calling the tool during normal work.

Tool definition:

[PASTE FINAL FUNCTION SCHEMA]

The Payoff: Tool conversion is not finished when the schema looks clean. It is finished when the schema survives real and invalid examples without surprising your validator or your agent loop.
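The generated examples become useful once they run as fixtures. The sketch below uses a deliberately tiny validator that covers only required fields, basic types, enums, unexpected fields, and nested objects. It is an illustration, not a JSON Schema implementation, and a full validator such as the `jsonschema` package is the safer choice in real pipelines. The schema and cases are hypothetical.

```python
TYPE_MAP = {"string": str, "integer": int, "number": (int, float),
            "boolean": bool, "object": dict, "array": list}


def validate(args, schema):
    """Return a list of error strings; an empty list means the call is valid."""
    errors = []
    props = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in args:
            errors.append(f"missing required field: {name}")
    for name, value in args.items():
        if name not in props:
            if schema.get("additionalProperties") is False:
                errors.append(f"unexpected field: {name}")
            continue
        rule = props[name]
        type_name = rule.get("type")
        expected = TYPE_MAP.get(type_name) if isinstance(type_name, str) else None
        # bool is a subclass of int in Python, so reject it for numeric fields.
        bool_as_number = isinstance(value, bool) and type_name in ("integer", "number")
        if expected and (not isinstance(value, expected) or bool_as_number):
            errors.append(f"wrong type for {name}")
        elif "enum" in rule and value not in rule["enum"]:
            errors.append(f"{name} not in enum")
        elif type_name == "object":
            errors += [f"{name}.{e}" for e in validate(value, rule)]
    return errors


# Hypothetical tool input schema.
schema = {
    "type": "object",
    "properties": {
        "order_id": {"type": "string"},
        "reason": {"type": "string", "enum": ["damaged", "late", "wrong_item"]},
        "address": {"type": "object",
                    "properties": {"city": {"type": "string"}},
                    "required": ["city"]},
    },
    "required": ["order_id", "reason"],
    "additionalProperties": False,
}

# (arguments, should_pass) pairs that double as regression fixtures.
cases = [
    ({"order_id": "A1", "reason": "damaged"}, True),                 # happy path
    ({"reason": "damaged"}, False),                                  # missing required
    ({"order_id": "A1", "reason": "bored"}, False),                  # enum violation
    ({"order_id": "A1", "reason": "late", "address": {}}, False),    # nested mistake
    ({"order_id": "A1", "reason": "late", "admin": True}, False),    # unexpected field
]

for args, should_pass in cases:
    assert (validate(args, schema) == []) is should_pass, args
```

Keeping the cases as plain data makes it easy to append the model-generated examples and rerun them whenever the schema changes.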

If you are storing large batches of examples or test fixtures, TipTinker’s JSONL Linter, Validator & LLM Dataset Formatter is a practical way to keep evaluation files consistent.

Prompt 7: Audit The Tool Surface For Overexposure And Injection Risk

Model Recommendation: Claude works well for security-focused review when you need careful reasoning about tool descriptions, free-form fields, and unsafe authority boundaries.

You are auditing an agent toolset for schema-level security risks.

Review the tools below and identify risks such as:

- destructive actions exposed without confirmation

- hidden admin or internal operations exposed to the model

- dangerous free-form string fields that could be abused

- URLs, HTML, markdown, or document text that may carry prompt injection into later steps

- sensitive identifiers that should be runtime-injected instead of model-controlled

- tool descriptions that are too vague and encourage misuse

Return:

1. Tool-by-tool risk level

2. Specific risky fields or descriptions

3. Which tools should require confirmation gates

4. Which tools should be hidden entirely

5. Schema hardening recommendations

6. A safer revised exposure policy

Tools:

[PASTE TOOL LIST WITH DESCRIPTIONS AND SCHEMAS]

The Payoff: Schema conversion is part of agent security. Once a tool can fetch webpages, read documents, or trigger state changes, a sloppy contract becomes an execution path, not just a formatting issue.
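Some of these checks are simple enough to automate as a pre-filter before the model-assisted review. The sketch below is a rough heuristic under stated assumptions: the list of destructive verbs, the sensitive field names, the 20-character description threshold, and the `requires_confirmation` flag are all hypothetical conventions, not part of any provider's schema.

```python
DESTRUCTIVE_HINTS = ("delete", "remove", "cancel", "refund", "purge", "revoke")
SENSITIVE_FIELDS = {"tenant_id", "user_id", "api_key", "token"}  # hypothetical
STRING_CONSTRAINTS = {"enum", "pattern", "maxLength", "format"}


def audit_tool(tool):
    """Return a list of schema-level findings for one tool definition."""
    findings = []
    name = tool["name"].lower()
    # requires_confirmation is an assumed metadata flag owned by your application.
    if any(h in name for h in DESTRUCTIVE_HINTS) and not tool.get("requires_confirmation"):
        findings.append("destructive action without confirmation gate")
    if len(tool.get("description", "")) < 20:
        findings.append("description too short to scope the tool")
    properties = tool.get("parameters", {}).get("properties", {})
    for field, rule in properties.items():
        if field.lower() in SENSITIVE_FIELDS:
            findings.append(f"sensitive field should be runtime-injected: {field}")
        if rule.get("type") == "string" and not (rule.keys() & STRING_CONSTRAINTS):
            findings.append(f"unconstrained free-form string: {field}")
    return findings


# Hypothetical tool that trips several checks.
risky_tool = {
    "name": "delete_account",
    "description": "Deletes.",
    "parameters": {
        "type": "object",
        "properties": {
            "tenant_id": {"type": "string", "pattern": "^t-[0-9]+$"},
            "comment": {"type": "string"},
        },
    },
}

for finding in audit_tool(risky_tool):
    print(finding)
```

A pass like this will miss context-dependent risks such as prompt injection carried in fetched content, which is why the prompt-based review above still matters.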

When your agent starts mixing tool calls with retrieved or untrusted text, "What is Prompt Injection, and How to Protect Your AI from Malicious Users" is a strong companion reference for hardening the surrounding workflow.

A Practical Conversion Pipeline That Holds Up

If you want a conversion process that stays stable as the spec grows, use this order:

1. Inventory the API surface and expose only user-meaningful operations.

2. Flatten the request contract into a canonical input schema.

3. Hide runtime-only fields such as auth, tenant scoping, and server defaults.

4. Rename the operation for tool selection, not for REST documentation.

5. Emit provider-ready function schemas from the canonical contract.

6. Validate with examples and invalid cases before wiring the tool into an agent loop.

7. Run a security review on the final exposed toolset.

For broader API workflow thinking around endpoint design, contracts, and backend orchestration, "API & Database Architecture: 10 AI Prompts for Backend Developers" fits naturally alongside this conversion layer.

Pro-Tip

Do not ask one prompt to do the entire conversion in one shot. Chain the work: one prompt to choose the operations, one to flatten schema composition, one to separate hidden runtime context, one to generate the final function declaration, and one to build tests. Gemini is often the better fit for large spec synthesis, Claude for naming and policy wording, DeepSeek for structured schema decomposition, and ChatGPT for fast day-to-day transformation work.

Teams that treat OpenAPI-to-tool conversion as a repeatable contract-design problem build agents that call the right function more often, fail validation less often, and stay easier to evolve as the API surface changes.