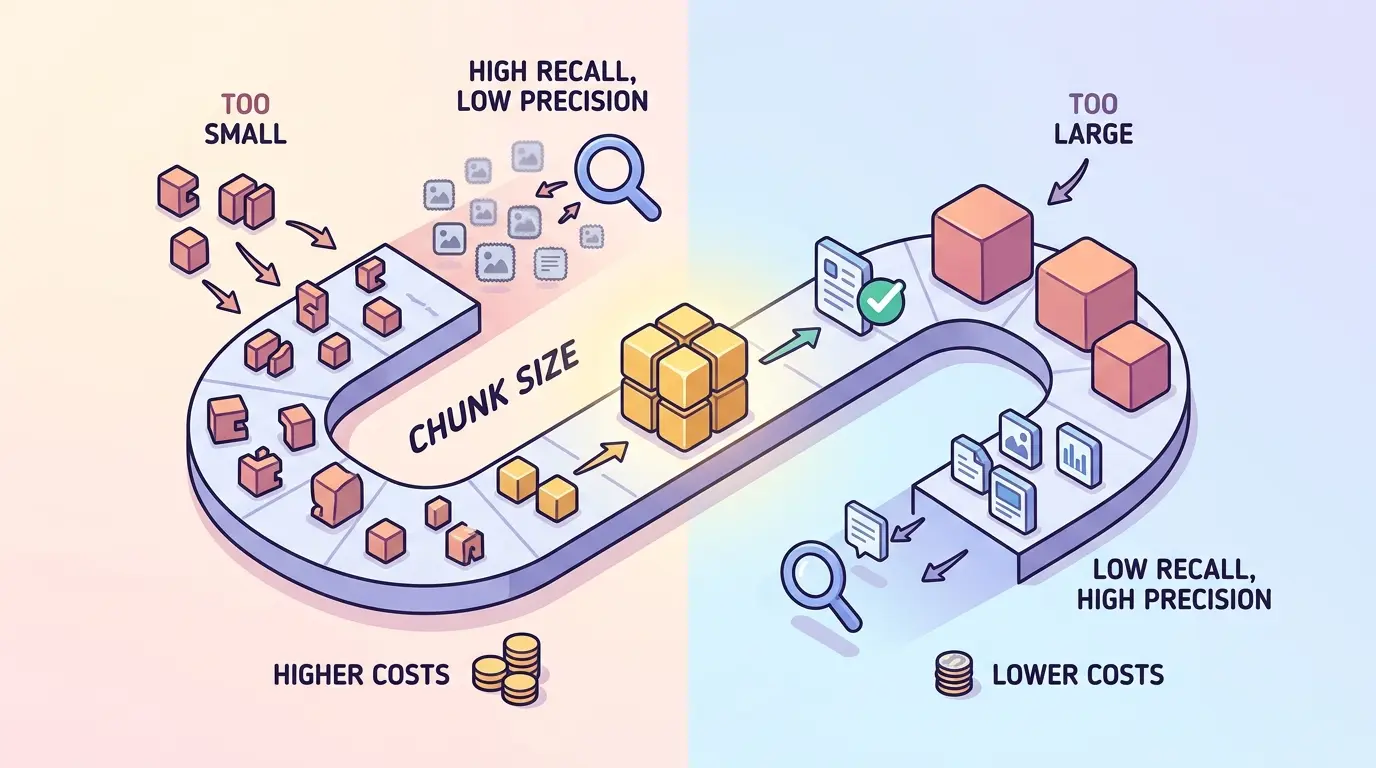

Most RAG systems do not fail because the embedding model is weak. They fail because the source material is split at the wrong granularity. If your chunks are too small, you fracture meaning, separate qualifiers from claims, and make recall depend on lucky adjacency. If they are too large, each retrieval hit carries too much unrelated text, which hurts precision and inflates prompt cost.

Whether you use ChatGPT, Gemini, Claude, or DeepSeek, the chunking workflow stays the same: identify the real answer unit, preserve semantic boundaries, test retrieval quality, and price the full pipeline. The AI Prompts below are optimized as a universal foundation for RAG engineers, search teams, and product builders who need better retrieval decisions without guesswork. Each model has different strengths, but the process is portable.

Why Chunk Size Is Not a Single Magic Number

Chunk size controls three things at once:

- Precision: smaller, tighter chunks usually embed a cleaner concept, which helps the retriever rank the right idea higher.

- Recall: larger chunks are more likely to keep the full answer unit intact, especially when meaning depends on nearby qualifiers, steps, or clauses.

- Cost: every choice changes index size, overlap duplication, reranker workload, and prompt-time token usage after retrieval.

If your team is still debating whether bigger context windows can replace retrieval discipline, The Context Window Trap: When to Choose RAG vs. Long-Context Models for Business Data is a useful framing reference. Long context can hide weak chunking for a while, but it rarely fixes it.

What Good Chunking Actually Preserves

A useful chunk is not defined by an arbitrary token count alone. It preserves the answer unit and, when needed, the citation unit. In practice, that often means keeping one procedure, one policy clause, one FAQ pair, one tightly related concept group, or one symbol-level code explanation together.

That is why the right chunk size often changes across the same corpus. A troubleshooting guide, API reference, meeting note, legal policy, and ticket archive do not have the same semantic boundaries. The prompts below help you make that decision with evidence instead of folklore.

Prompt 1: Audit the Corpus Before You Touch Chunk Size

Model Recommendation: Gemini works well when you need to synthesize representative documents, query logs, and document-shape patterns into one retrieval design view.

You are acting as a RAG retrieval architect.

I will provide representative documents from my corpus plus a sample of real user queries.

Your job is to determine the natural answer unit and the likely chunking risks for each document type.

For each document type, return:

1. Document type

2. Dominant structure (FAQ, narrative, spec, step-by-step, policy, code-adjacent, table-heavy, transcript, ticket, changelog)

3. Typical answer unit size

4. Typical citation unit size

5. Risks if chunks are too small

6. Risks if chunks are too large

7. Recommended initial chunk size range in tokens

8. Recommended overlap range in tokens

9. Whether parent-child retrieval would help

10. Metadata fields that should travel with each chunk

Then give:

- a ranked list of the 3 biggest chunking risks in this corpus

- the 2 to 4 chunking policies I should test first

Representative documents:

[PASTE 5 TO 10 DOCUMENT SAMPLES]

Representative user queries:

[PASTE 20 TO 50 REAL USER QUESTIONS]

The Payoff: This stops you from applying one token count across every file type. Most bad chunking starts with a false assumption that the corpus is more uniform than it really is.

Prompt 2: Match Chunk Size to Query Shape and Answer Span

Model Recommendation: Claude is often the better fit when you need careful reasoning about answer scope, ambiguity, and how user intent maps to retrieval granularity.

You are helping me map RAG query intent to chunking strategy.

Given a list of real user questions, classify each query into one of these answer patterns:

- single fact

- scoped definition

- multi-step procedure

- clause lookup

- comparison

- troubleshooting

- code lookup

- summarization request

- multi-hop synthesis

Return a table with these columns:

1. Query cluster

2. Typical answer span length

3. Best chunk behavior (tiny focused / medium semantic / larger sectional / parent-child)

4. Recommended chunk size range in tokens

5. Recommended overlap

6. Whether reranking is important

7. Failure mode if chunks are too small

8. Failure mode if chunks are too large

Then summarize:

- which query clusters are precision-sensitive

- which query clusters are recall-sensitive

- which clusters should not share the same chunking policy

Query sample:

[PASTE QUERY LOG SAMPLE]

The Payoff: Chunk size should follow the shape of the question, not the shape of the file. A system built for clause lookup will usually chunk differently than one built for long troubleshooting answers.

If you want to inspect how boundary choices change the fragments your retriever actually sees, TipTinker’s RAG Chunking Visualizer is a useful companion while working through the next prompt.

Prompt 3: Find Natural Semantic Boundaries Instead of Arbitrary Cuts

Model Recommendation: Claude works well for boundary analysis when meaning depends on headings, list nesting, clause references, tables, and neighboring explanation.

You are designing chunking rules for a RAG preprocessing pipeline.

Analyze the sample document and create split rules that preserve meaning.

Respect these structures when relevant:

- headings and subheadings

- question and answer pairs

- numbered procedures

- bullet lists with lead-in sentences

- tables with titles or notes

- code blocks with nearby explanation

- legal or policy clauses with cross-references

- figure references and captions

Return:

1. Preferred split points

2. Forbidden split points

3. What context should be copied into each child chunk

4. When sliding windows are justified

5. When parent-child retrieval is better than larger flat chunks

6. A pseudo-algorithm I can implement in a chunker

7. The biggest semantic-boundary mistakes this document type invites

Document:

[PASTE SAMPLE DOCUMENT]

The Payoff: Fixed token windows are easy to implement, but they often split the exact relationship the retriever needs. This prompt helps you preserve meaning before you start tuning numbers.

Prompt 4: Tune Overlap Without Paying for Duplication You Do Not Need

Model Recommendation: ChatGPT is a strong day-to-day choice for operational what-if analysis when you want quick overlap recommendations across multiple candidate chunk policies.

You are optimizing overlap for a RAG chunking pipeline.

Given the document types, candidate chunk sizes, and example boundary breaks, determine the minimum overlap that preserves continuity without unnecessary duplication.

Return:

1. Recommended overlap in tokens and percentage for each document type

2. What that overlap is preserving (subject carryover, pronoun resolution, step continuity, clause dependency, code context)

3. When zero overlap is safe

4. When larger overlap is justified

5. How overlap changes chunk count and duplicated token load

6. Which overlap settings are likely to hurt precision by repeating too much context

Inputs:

- document types: [PASTE]

- candidate chunk sizes: [PASTE]

- boundary examples: [PASTE]

The Payoff: Overlap is insurance, not free context. Too little overlap creates retrieval cliffs; too much overlap quietly multiplies storage, reranking work, and prompt waste.

Prompt 5: Build a Retrieval Evaluation Matrix Before Reindexing Everything

Model Recommendation: DeepSeek is useful when you need a structured comparison across chunking policies, metrics, and failure modes rather than a vague recommendation.

You are building a chunk-size evaluation plan for a RAG system.

I will provide candidate chunking policies and a benchmark set of user questions with expected answer sources.

Compare the policies and produce both an experiment plan and a decision table.

For each policy, score or estimate:

- hit@k

- answer containment

- citation cleanliness

- average irrelevant token load

- median retrieved tokens

- reranker burden

- storage multiplier

- latency risk

- failure risk for long-tail queries

Then recommend the best policy for:

1. precision-first systems

2. recall-first systems

3. cost-capped systems

Also return:

- the smallest benchmark set that would still discriminate between the policies

- the exact failure signatures I should inspect in logs

Candidate policies:

[PASTE CHUNK SIZES, OVERLAP VALUES, AND DOC-TYPE RULES]

Benchmark questions and source answers:

[PASTE GOLD QUERY SET]

The Payoff: This is how you stop tuning by vibes. A chunk policy should win because it retrieves cleaner answers under measured constraints, not because it sounds reasonable in a design meeting.

Prompt 6: Estimate Indexing and Prompt-Time Cost Before You Commit

Model Recommendation: ChatGPT works well for fast scenario planning when you want to pressure-test chunk counts, duplication, and context spend before changing the pipeline.

You are forecasting the operational cost of RAG chunking choices.

Using corpus size, average document length, chunk size, overlap, top-k, average turns per session, and monthly volume, estimate:

1. approximate number of chunks

2. duplication factor from overlap

3. average retrieved tokens per answer

4. embedding workload for full reindexing

5. prompt token consumption caused by retrieval context

6. the highest-leverage way to reduce cost without damaging retrieval quality too much

Return:

- formulas used

- assumptions made

- a side-by-side comparison of the candidate policies

- a recommendation for the cheapest safe policy

Inputs:

[PASTE CORPUS TOKENS, CHUNK POLICIES, TOP-K, SESSION VOLUME, AND RETRIEVAL SETTINGS]

The Payoff: Chunk size decisions look cheap until overlap, top-k, and session volume multiply the bill. For quick token arithmetic during this step, TipTinker’s AI Token Calculator is a practical companion.

Prompt 7: Create Document-Type Rules for Mixed Corpora

Model Recommendation: Gemini is often the better fit when the corpus spans manuals, policies, FAQs, tickets, and code-adjacent material that need separate treatment.

You are designing a chunking policy for a mixed RAG corpus.

My corpus contains these document types:

- FAQs

- API docs

- product manuals

- policies and contracts

- release notes

- support tickets

- chat transcripts

- code snippets with explanations

- PDFs with tables and captions

Create a document-type-specific chunking policy.

For each document type, return:

1. boundary unit to preserve

2. recommended chunk size range in tokens

3. recommended overlap

4. metadata fields to attach

5. whether parent-child retrieval or summary nodes should be used

6. whether reranking is especially important

7. ingestion warnings or edge cases

8. what not to do

Then return a short policy document I can hand to the engineering team.

The Payoff: Mixed corpora punish one-size-fits-all chunkers. This prompt gives you a policy that reflects how different source types carry meaning and how they fail under retrieval.

Prompt 8: Diagnose Precision and Recall Failures From Real Misses

Model Recommendation: DeepSeek works well for complex logic and root-cause analysis when you need to separate chunking defects from embedding, reranking, or filtering problems.

You are debugging a failed RAG retrieval result.

I will provide:

- the user query

- the expected answer or source section

- the retrieved chunks and their ranks

- nearby source text around the true answer

- metadata filters and retrieval settings

Determine whether the miss came from:

- chunk size too small

- chunk size too large

- bad semantic boundary split

- missing overlap

- metadata filtering

- embedding mismatch

- reranker failure

- top-k too low

- missing parent-child strategy

Return:

1. primary failure cause

2. supporting evidence

3. the smallest next experiment to run

4. what chunk policy change to test

5. how I should verify improvement

6. whether the issue is precision, recall, or cost related

Debug data:

[PASTE QUERY, EXPECTED ANSWER, RETRIEVED CHUNKS, RANKS, AND RETRIEVAL SETTINGS]

The Payoff: Real misses are better teachers than abstract chunking debates. This prompt helps you isolate whether the problem is granularity, boundary handling, or something outside chunking altogether.

A Practical Chunking Loop That Holds Up

If you want a repeatable way to tune chunk size without getting lost in theory, use this loop:

- Sample the corpus and query log instead of tuning against one favorite document.

- Define the answer unit and citation unit for each major document type.

- Draft multiple candidate policies instead of chasing one global token count.

- Measure retrieval quality on real questions with answer containment and irrelevant-token load.

- Inspect failure cases manually so you can see whether chunking or ranking is actually to blame.

- Lock a default plus exceptions for document types that clearly need different treatment.

That is the discipline that turns chunk size from a guess into an engineering control.

Pro-Tip

Chain these prompts instead of using only one. Start with the corpus audit, run the query-to-answer-span mapping, draft semantic boundary rules, then compare policies with the evaluation matrix. Use Gemini for broader corpus synthesis, Claude for boundary reasoning, DeepSeek for structured failure analysis, and ChatGPT for fast operational iteration and cost what-if work.

The best chunk size for RAG is not the biggest number your model can tolerate or the smallest number your vector store can hold. It is the smallest unit that keeps the answer intact, retrieves cleanly, and fits the economics of your system.