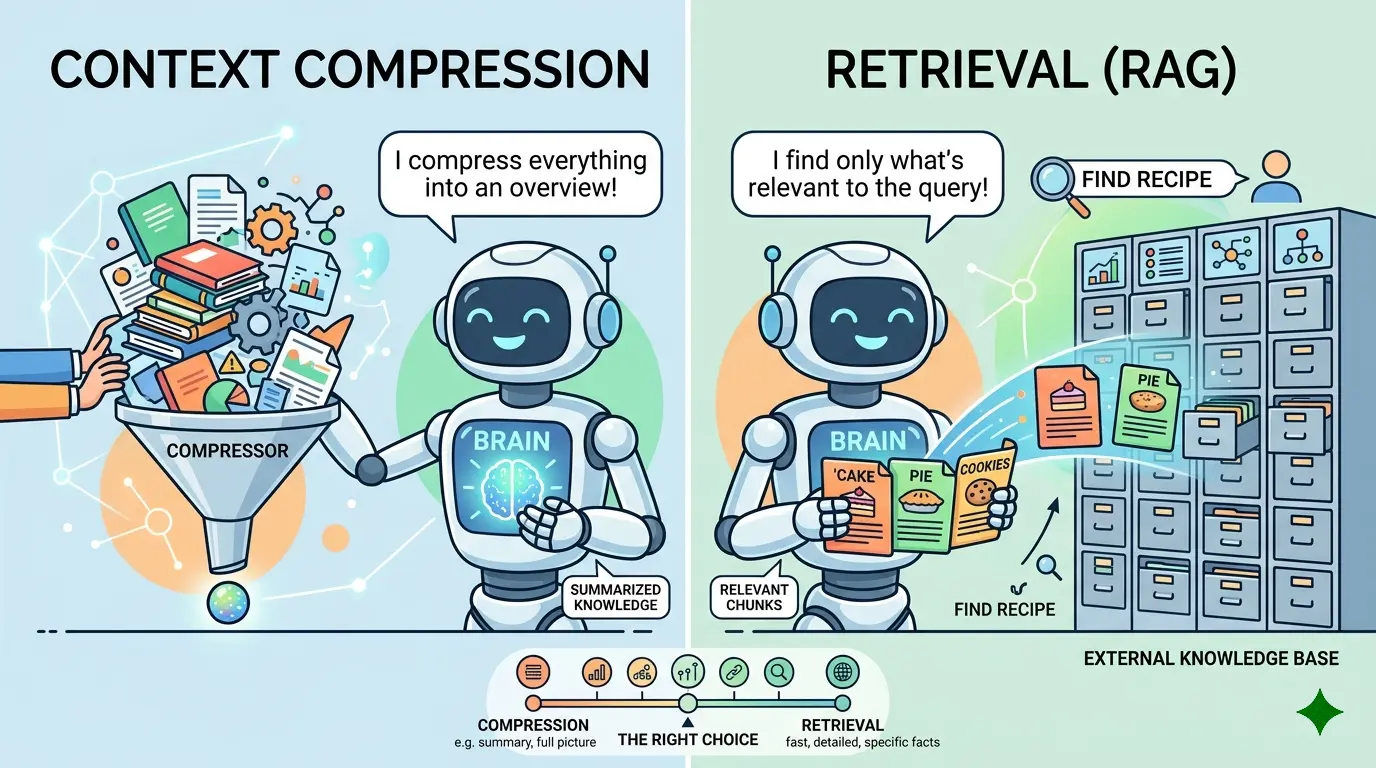

Most AI workflows do not fail because the model runs out of intelligence. They fail because the context layer is poorly managed. A team keeps stuffing old conversation into the prompt, the token budget swells, and the model starts reasoning over a blurry summary of everything. Or the team overcorrects, pushes everything into retrieval, and the system loses the stable working state it needed to stay coherent from one step to the next.

That bottleneck shows up in agent design, long-form analysis, customer support automation, research workflows, and multi-step content systems. ChatGPT, Gemini, Claude, and DeepSeek can all help with the decision, but each is useful in a slightly different way. The prompts below are written as a model-agnostic starting point for AI engineers, product teams, and automation builders who need to decide when to compress context, when to retrieve fresh evidence, and when to combine both. ChatGPT works well for day-to-day iteration, Claude is often the better fit for careful compression rules and structured reasoning, Gemini is useful when broader document synthesis matters, and DeepSeek is strong at technical decomposition and routing logic.

If your team is still deciding whether retrieval belongs in the architecture at all, The Context Window Trap: When to Choose RAG vs. Long-Context Models for Business Data is a useful companion before you tune the memory layer itself.

Diagnose Whether the Problem Is Context Bloat or Missing Evidence

Model Recommendation: DeepSeek is often the better fit for separating memory problems from retrieval problems because it works well for structured analysis and system decomposition.

Act as an AI systems architect.

I need to decide whether my workflow problem should be solved with:

1. context compression

2. retrieval from source documents

3. a hybrid approach

Workflow description:

[DESCRIBE THE SYSTEM, USERS, TASKS, AND FAILURE SYMPTOMS]

Current prompt inputs:

[PASTE WHAT THE MODEL CURRENTLY RECEIVES]

Available source material outside the prompt:

[LIST DOCUMENTS, DATABASES, TOOLS, TICKETS, FILES, OR KNOWLEDGE BASES]

Observed failures:

[PASTE EXAMPLES OF HALLUCINATION, REPETITION, MISSED FACTS, STALE FACTS, TOKEN BLOAT, OR SLOW RESPONSES]

Classify each failure as mostly caused by:

- too much stale context

- too little retained working state

- missing external evidence

- poor retrieval design

- mixed failure requiring compression plus retrieval

Then return:

- the dominant failure pattern

- which information should stay in working memory

- which information should be compressed

- which information should only be retrieved on demand

- the risks of over-compressing this workflow

- the risks of over-retrieving this workflow

- a final recommendation with rationale

Do not give generic advice. Base the answer on the actual workflow and failures.

The Payoff: Teams often argue about compression and retrieval as if they were competing ideologies. This prompt turns the choice into a failure analysis problem, which is usually the fastest way to see what actually belongs in working memory versus what belongs in a source-of-truth lookup.
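Once each failure has a root-cause label, finding the dominant pattern is a simple tally. The sketch below is one way to do that, assuming the labels have already been assigned by a reviewer or by the prompt above; the label strings and the `dominant_pattern` helper are illustrative names, not part of any library.

```python
from collections import Counter

# Hypothetical label names mirroring the five root causes in the prompt.
ROOT_CAUSES = {
    "stale_context",
    "lost_working_state",
    "missing_evidence",
    "poor_retrieval",
    "mixed",
}


def dominant_pattern(labels: list[str]) -> tuple[str, float]:
    """Return the most common root cause and its share of all failures."""
    if not labels:
        raise ValueError("no failures to classify")
    unknown = set(labels) - ROOT_CAUSES
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    cause, count = Counter(labels).most_common(1)[0]
    return cause, count / len(labels)


labels = ["stale_context", "missing_evidence", "stale_context", "poor_retrieval"]
print(dominant_pattern(labels))
```

If no single cause clearly dominates, that is itself a hint that the hybrid option deserves a closer look.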

Build a Compression Policy for Stable Working State

Model Recommendation: Claude is often the better fit when you need careful summarization rules, preservation of nuance, and clear boundaries around what a compressed state must keep.

You are designing a context compression policy for a multi-step AI workflow.

My workflow has long conversations, repeated instructions, and evolving task state.

I need a compression strategy that preserves:

- current goal

- constraints

- decisions already made

- unresolved questions

- user preferences

- in-progress outputs

But it must remove:

- repeated phrasing

- obsolete branches

- low-value chatter

- superseded decisions

Workflow details:

[DESCRIBE THE TASK FLOW]

Sample transcript or prompt history:

[PASTE LONG THREAD]

Create:

1. a compressed state template

2. fields that must always survive compression

3. fields that should expire automatically

4. signals that a prior decision has been superseded

5. confidence markers for uncertain summaries

6. a safe handoff summary under a strict token budget of [STATE BUDGET]

7. failure modes to watch for if the compression is too aggressive

Return the output as an operational policy, not a generic summary.

The Payoff: Compression is the right answer when the model still needs continuity but not the full transcript. This prompt helps you preserve durable task state without dragging along every old turn; carrying those old turns is usually where latency and prompt drift start to stack up.
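To make the idea of a compressed state template concrete, here is a minimal sketch of what one could look like in code. The `WorkingState` and `Decision` classes and their field names are assumptions chosen to mirror the "must preserve" list in the prompt; a real policy would come from the model's output for your workflow.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Decision:
    topic: str
    choice: str
    turn: int  # conversation turn at which the decision was made


@dataclass
class WorkingState:
    goal: str
    constraints: list[str] = field(default_factory=list)
    decisions: list[Decision] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)
    user_preferences: list[str] = field(default_factory=list)

    def record_decision(self, topic: str, choice: str, turn: int) -> None:
        """Keep only the latest decision per topic; older ones are superseded."""
        self.decisions = [d for d in self.decisions if d.topic != topic]
        self.decisions.append(Decision(topic, choice, turn))

    def render(self) -> str:
        """Render the state as a compact text block for the next prompt."""
        lines = [f"GOAL: {self.goal}"]
        lines += [f"CONSTRAINT: {c}" for c in self.constraints]
        lines += [
            f"DECIDED (turn {d.turn}): {d.topic} = {d.choice}"
            for d in self.decisions
        ]
        lines += [f"OPEN: {q}" for q in self.open_questions]
        lines += [f"PREFERENCE: {p}" for p in self.user_preferences]
        return "\n".join(lines)


state = WorkingState(goal="Draft the Q3 report", constraints=["max 2 pages"])
state.record_decision("format", "PDF", turn=3)
state.record_decision("format", "HTML", turn=9)  # supersedes the turn-3 choice
print(state.render())
```

The design choice worth noting is that superseded decisions are dropped when a new one is recorded, not at summarization time, so the rendered state never carries two conflicting answers for the same topic.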

Decide What Must Never Be Compressed

Model Recommendation: Claude works well here because the decision depends on precision, exception handling, and careful treatment of high-risk source material.

Act as a reliability reviewer for an AI workflow.

I want to identify which information should never be stored only as compressed memory.

System context:

[DESCRIBE THE WORKFLOW]

Information types involved:

[LIST POLICIES, CONTRACTS, SPECS, TABLES, NUMBERS, SOURCE DOCS, TICKETS, CODE, CUSTOMER DATA, LEGAL TEXT, OR PROCEDURES]

For each information type, classify whether it should be:

- safe to compress

- safe to compress with citation

- retrieved from source every time

- retrieved when confidence is low

- stored only as a metadata pointer, not a summary

Then explain:

- what is lost if that information is compressed too early

- what kinds of errors compression could introduce

- which information requires exact quoting or exact values

- which information should always remain attached to a source reference

- a short governance rule for engineers implementing this system

The Payoff: Compression is dangerous when the workflow depends on exact values, contract language, policy wording, fresh operational state, or high-stakes instructions. This prompt draws that line early, before a lossy summary becomes the only thing the model can see.
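The classification the prompt asks for can be carried into code as a small lookup table. The following is only a sketch: the `Handling` enum mirrors the five categories in the prompt, while the information types and their assignments in `POLICY` are hypothetical examples that you would replace with the model's output for your own workflow.

```python
from enum import Enum


class Handling(Enum):
    COMPRESS = "safe to compress"
    COMPRESS_WITH_CITATION = "safe to compress with citation"
    RETRIEVE_ALWAYS = "retrieved from source every time"
    RETRIEVE_ON_LOW_CONFIDENCE = "retrieved when confidence is low"
    POINTER_ONLY = "stored only as a metadata pointer"


# Hypothetical assignments for illustration only.
POLICY = {
    "chat_history": Handling.COMPRESS,
    "meeting_notes": Handling.COMPRESS_WITH_CITATION,
    "contract_clause": Handling.RETRIEVE_ALWAYS,
    "pricing_table": Handling.RETRIEVE_ALWAYS,
    "product_faq": Handling.RETRIEVE_ON_LOW_CONFIDENCE,
    "customer_record": Handling.POINTER_ONLY,
}


def handling_for(info_type: str) -> Handling:
    """Look up the handling rule; unknown types fall back to the strictest one."""
    return POLICY.get(info_type, Handling.RETRIEVE_ALWAYS)


print(handling_for("contract_clause").value)
print(handling_for("unlisted_type").value)
```

Defaulting unknown types to retrieval from source is a deliberately conservative assumption: a new information type costs an extra lookup until someone classifies it, instead of silently ending up in a lossy summary.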

Create a Retrieval Trigger Policy Instead of Retrieving Everything

Model Recommendation: DeepSeek is useful when the task requires routing rules, trigger conditions, and explicit decision logic.

You are designing retrieval trigger rules for an AI system.

I need retrieval to happen only when it is justified, not on every turn.

Workflow description:

[DESCRIBE USERS, TASKS, TOOLS, AND KNOWLEDGE SOURCES]

Current memory or compressed state:

[PASTE CURRENT MEMORY FORMAT OR SUMMARY TEMPLATE]

Available retrieval sources:

[LIST DOCS, DATABASES, KNOWLEDGE BASES, FILES, LOGS, OR SEARCH TOOLS]

Design a retrieval trigger policy that specifies:

- when the compressed state is enough

- when the model must retrieve external evidence

- when retrieval should be optional but recommended

- when stale memory should be overridden by fresh lookup

- when a user request requires citation or source-backed output

- when the system should ask for clarification before retrieval

- when retrieval is unnecessary overhead

Return:

1. trigger conditions

2. no-trigger conditions

3. freshness-sensitive cases

4. high-risk cases

5. a simple decision tree the orchestrator can use

The Payoff: Retrieval is most effective when it is treated as a conditional tool, not a reflex. This prompt gives teams a usable trigger policy so the system retrieves when evidence matters and stays lean when the current working state is already enough.
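A trigger policy of this kind usually ends up as a short decision function in the orchestrator. The sketch below shows one possible ordering of checks, assuming the orchestrator can already compute the signals in `TurnSignals`; every field name and the order of the rules are illustrative assumptions, not a prescribed design.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    USE_STATE = "answer from compressed state"
    RETRIEVE = "retrieve external evidence"
    CLARIFY = "ask for clarification before retrieval"


@dataclass
class TurnSignals:
    is_ambiguous: bool          # request is too vague to form a good query
    needs_citation: bool        # user asked for source-backed output
    needs_exact_value: bool     # answer depends on exact numbers or wording
    state_age_seconds: float    # age of the relevant fact in memory
    freshness_limit_seconds: float
    state_covers_request: bool  # compressed state already holds the answer


def decide(turn: TurnSignals) -> Action:
    """Walk the decision tree from cheapest exit to most expensive."""
    if turn.is_ambiguous:
        return Action.CLARIFY
    if turn.needs_citation or turn.needs_exact_value:
        return Action.RETRIEVE
    if turn.state_age_seconds > turn.freshness_limit_seconds:
        return Action.RETRIEVE  # stale memory is overridden by a fresh lookup
    if not turn.state_covers_request:
        return Action.RETRIEVE
    return Action.USE_STATE


signals = TurnSignals(
    is_ambiguous=False,
    needs_citation=False,
    needs_exact_value=False,
    state_age_seconds=120,
    freshness_limit_seconds=3600,
    state_covers_request=True,
)
print(decide(signals).value)
```

Checking for ambiguity first avoids spending a retrieval call on a query that is unlikely to match anything useful.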

Design a Hybrid Memory Layer That Compresses State and Retrieves Evidence

Model Recommendation: Gemini is useful when you need to synthesize workflow notes, document sources, and orchestration rules into one architecture plan.

Act as a memory architecture designer for an AI workflow.

I want a hybrid design where:

- stable working state is compressed

- source-of-truth material is retrieved on demand

- the model can tell the difference between remembered context and verified evidence

System description:

[DESCRIBE THE SYSTEM]

Task sequence:

[DESCRIBE MULTI-STEP FLOW]

Source materials:

[DESCRIBE DOCUMENT STORES, APIS, OR DATABASES]

Create a hybrid design that includes:

- what belongs in compressed working memory

- what belongs in retrievable storage only

- how memory should point to sources without copying them

- when to refresh memory from retrieval results

- how to prevent stale retrieved facts from becoming permanent memory

- how to separate user preference memory from task-specific state

- a recommended prompt structure showing where compressed state and retrieved evidence should appear

Return the result as an implementation blueprint with explicit boundaries.

The Payoff: In production systems, the winning pattern is often not compression or retrieval alone. It is compressed state for continuity plus retrieval for verifiable evidence. This prompt makes that split explicit so the model stops treating summaries and source material as if they were the same thing.
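One way to keep summaries and source material visibly separate is to give each its own labeled section when the prompt is assembled. The sketch below assumes a hypothetical `Evidence` record and section headings of my own choosing; the exact labels matter less than keeping them consistent across turns.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    source_id: str     # pointer back to the source of truth
    retrieved_at: str  # ISO 8601 timestamp of the lookup
    text: str


def build_prompt(state_summary: str, evidence: list[Evidence], question: str) -> str:
    """Assemble a prompt that separates remembered context from retrieved evidence."""
    parts = [
        "## REMEMBERED CONTEXT (compressed summary, not verified)",
        state_summary.strip(),
        "",
        "## VERIFIED EVIDENCE (retrieved for this turn)",
    ]
    if evidence:
        for item in evidence:
            parts.append(f"[{item.source_id} | retrieved {item.retrieved_at}]")
            parts.append(item.text.strip())
    else:
        parts.append("(none retrieved)")
    parts += ["", "## QUESTION", question.strip()]
    return "\n".join(parts)


prompt = build_prompt(
    "Goal: answer refund questions. Preference: short replies.",
    [Evidence("policy-12", "2024-01-01T00:00:00Z", "Refunds are accepted within 30 days.")],
    "What is the refund window?",
)
print(prompt)
```

Carrying the source ID and retrieval time with each evidence item also gives a later step what it needs to decide whether a fact is too old to be copied into memory.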

If you are redesigning multi-step workflows around this idea, Prompt Engineering 3.0: The End of Prompting and the Rise of Flow Engineering is a useful parallel read because the real leverage usually comes from orchestration rules, not isolated prompt wording.

Build Retrieval Units the Model Can Actually Use

Model Recommendation: Gemini works well when chunking, metadata, and document structure need to be reasoned about across multiple examples.

Act as a retrieval index reviewer.

I have decided that some information should be retrieved instead of compressed.

Now I need to design retrieval units that preserve enough context for the model to answer correctly.

Document types:

[LIST DOC TYPES]

Sample documents or excerpts:

[PASTE EXAMPLES]

Expected user questions:

[PASTE QUERY EXAMPLES]

Current or planned metadata:

[LIST FIELDS]

Recommend:

- chunking strategy by document type

- when chunks should keep parent-child relationships

- which metadata should be used for filtering versus ranking

- what should stay out of embeddings and remain metadata-only

- how to preserve tables, lists, code, and section headings

- what chunking mistakes would make retrieval look worse than it really is

- a validation checklist before reindexing

Make the answer specific to the examples.

The Payoff: Many teams think retrieval failed when the real failure was bad chunk design. If the retrieval units destroy document meaning, the model will still miss the point even when the right file was technically found.
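As an illustration of a retrieval unit that keeps its parent context, the sketch below splits a Markdown-style document at headings and stores the full heading path with each chunk. It assumes headings are marked with leading `#` characters; `chunk_by_heading` is a hypothetical helper, and it does not handle `#` lines inside fenced code blocks.

```python
def chunk_by_heading(text: str) -> list[dict]:
    """Split text at Markdown headings, keeping the heading path as metadata."""
    chunks: list[dict] = []
    path: list[str] = []  # current heading trail, e.g. ["Refunds", "Eligibility"]
    body: list[str] = []  # lines collected under the current heading

    def flush() -> None:
        content = "\n".join(body).strip()
        if content:
            chunks.append({"heading_path": " > ".join(path), "text": content})
        body.clear()

    for line in text.splitlines():
        if line.startswith("#"):
            flush()  # close the previous section before changing the path
            level = len(line) - len(line.lstrip("#"))
            del path[level - 1:]  # drop headings at this level or deeper
            path.append(line.lstrip("#").strip())
        else:
            body.append(line)
    flush()
    return chunks


doc = "# Refunds\nIntro\n## Eligibility\n30 days\n## Process\nEmail us"
for chunk in chunk_by_heading(doc):
    print(chunk)
```

With the heading path attached, a chunk that only says "30 days" still tells the model that it describes refund eligibility, which is the kind of context that naive fixed-size splitting tends to lose.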

If you want to inspect chunk boundaries before you reindex, the RAG Chunking Visualizer is useful for seeing where context gets split too aggressively.

Compare Token Cost, Latency, and Risk Before Committing

Model Recommendation: ChatGPT works well for practical tradeoff exercises where the goal is to turn a technical design choice into a simple operating decision.

You are helping me compare context compression versus retrieval for a production AI workflow.

Workflow description:

[DESCRIBE THE SYSTEM]

Operational constraints:

- latency target: [STATE TARGET]

- cost sensitivity: [LOW/MEDIUM/HIGH]

- freshness requirement: [LOW/MEDIUM/HIGH]

- tolerance for approximation: [LOW/MEDIUM/HIGH]

- need for source-backed answers: [LOW/MEDIUM/HIGH]

Candidate strategies:

1. mostly compressed long-context memory

2. mostly retrieval-based evidence lookup

3. hybrid memory plus retrieval

For each strategy, estimate:

- likely token cost behavior

- likely latency behavior

- main accuracy risk

- main freshness risk

- implementation complexity

- observability needs

- best-fit use cases

- worst-fit use cases

Finish with a clear recommendation and the single strongest reason for choosing it.

The Payoff: The right answer is often constrained less by model capability and more by budget, freshness, and error tolerance. This prompt helps translate architecture debates into operational tradeoffs that a team can actually act on.
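A rough back-of-the-envelope model can make the token cost behavior easier to see. The sketch below compares cumulative input tokens over a conversation for three cases: resending the full transcript, sending a fixed-size compressed state, and adding retrieval on some turns. All numbers are made-up assumptions, and the model ignores output tokens and the cost of producing the summaries themselves.

```python
def full_history_tokens(turns: int, tokens_per_turn: int) -> int:
    """Turn k resends k turns of text, so the total grows quadratically."""
    return tokens_per_turn * turns * (turns + 1) // 2


def compressed_tokens(turns: int, tokens_per_turn: int, state_budget: int) -> int:
    """Each turn sends a fixed-size state plus the new turn, so growth is linear."""
    return turns * (state_budget + tokens_per_turn)


def hybrid_tokens(
    turns: int,
    tokens_per_turn: int,
    state_budget: int,
    retrieval_turns: int,
    evidence_tokens: int,
) -> int:
    """Compressed state on every turn plus evidence on the turns that retrieve."""
    base = compressed_tokens(turns, tokens_per_turn, state_budget)
    return base + retrieval_turns * evidence_tokens


# Hypothetical workload: 20 turns of about 500 tokens, an 800-token state,
# and 1,500 tokens of evidence on 5 of the turns.
print(full_history_tokens(20, 500))             # 105000
print(compressed_tokens(20, 500, 800))          # 26000
print(hybrid_tokens(20, 500, 800, 5, 1500))     # 33500
```

Under these assumptions the hybrid costs somewhat more than pure compression but stays far below resending the transcript, and the gap widens as conversations get longer.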

If you need rough prompt-budget estimates while making that call, the AI Token Calculator is a practical companion.

Turn Context Failures Into Regression Tests

Model Recommendation: DeepSeek is often the better fit for converting messy incidents into testable failure patterns and reusable evaluation cases.

You are building a regression suite for context-management failures in an AI workflow.

I will provide examples of bad outputs caused by:

- over-compression

- stale memory

- missing retrieval

- irrelevant retrieval

- mixed compression and retrieval failures

Failure examples:

[PASTE INCIDENTS, BAD ANSWERS, TOOL TRACES, OR REVIEW NOTES]

For each failure, create:

1. test ID

2. failure category

3. scenario setup

4. expected good behavior

5. the specific mistake to prevent

6. whether the fix should be compression, retrieval, or hybrid logic

7. severity if it regresses

8. tags for grouping similar failures

Then return:

- a minimal smoke-test pack

- a high-risk pack

- a freshness-sensitive pack

- a source-grounding pack

- a checklist for reviewers deciding whether the system chose the right memory strategy

The Payoff: Compression-versus-retrieval decisions improve fastest when failures become repeatable tests instead of one-off anecdotes. This prompt helps teams score whether the system chose the right context strategy, not just whether the final wording looked plausible.
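The eight fields the prompt asks for map naturally onto a small record type, and the packs become filters over those records. This is a minimal sketch under that assumption; `ContextTestCase`, the tag names, and the example case are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ContextTestCase:
    test_id: str
    category: str            # e.g. "over-compression", "stale memory"
    setup: str
    expected: str
    mistake_to_prevent: str
    fix: str                 # "compression", "retrieval", or "hybrid"
    severity: str            # "low", "medium", or "high"
    tags: frozenset[str]


def build_packs(cases: list[ContextTestCase]) -> dict[str, list[str]]:
    """Group test IDs into the four packs; a case may appear in several."""
    return {
        "smoke": [c.test_id for c in cases if "smoke" in c.tags],
        "high_risk": [c.test_id for c in cases if c.severity == "high"],
        "freshness": [c.test_id for c in cases if "freshness" in c.tags],
        "source_grounding": [c.test_id for c in cases if "source-grounding" in c.tags],
    }


cases = [
    ContextTestCase(
        test_id="CTX-001",
        category="stale memory",
        setup="Price changed after the summary was written.",
        expected="System retrieves the current price before answering.",
        mistake_to_prevent="Quoting the price from compressed memory.",
        fix="retrieval",
        severity="high",
        tags=frozenset({"smoke", "freshness"}),
    ),
]
print(build_packs(cases))
```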

Pro-Tip: Compress the State, Retrieve the Proof

The cleanest pattern in many AI systems is simple: keep a compressed record of the goal, constraints, decisions, and open loops, then retrieve the facts, documents, and fresh operational evidence only when the answer depends on them. That keeps the model coherent without forcing it to trust a summary where exact source material is the safer choice.

The strongest teams do not treat context as a dumping ground. They design it as a system: compression for continuity, retrieval for truth, and explicit rules for when each one takes control.