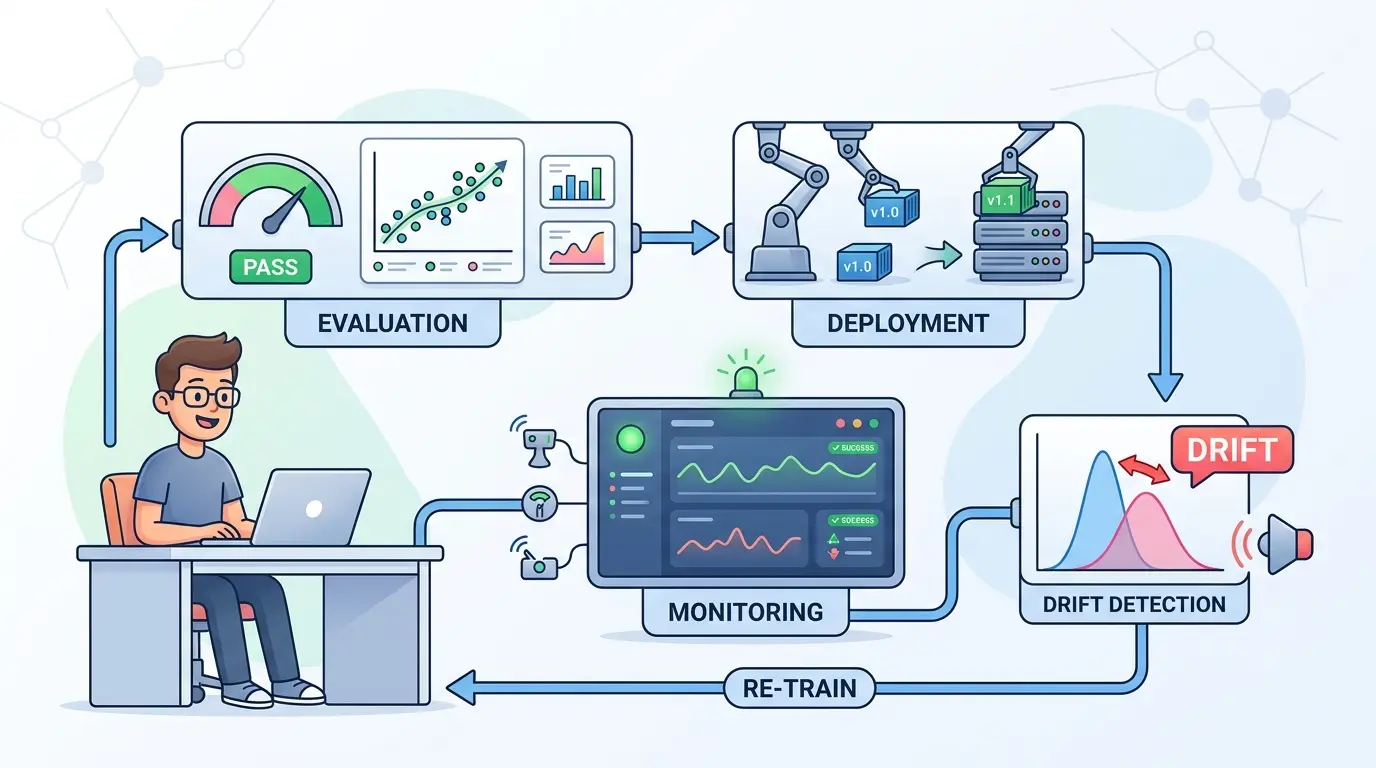

MLOps work rarely breaks because a model fails in an obvious way. The real bottlenecks usually show up earlier and cost more: weak evaluation criteria, rollout plans without hard stop conditions, dashboards full of symptoms instead of root causes, and drift alerts that do not tell you whether to retrain, recalibrate, or do nothing.

That is where strong AI prompts become useful. ChatGPT, Gemini, Claude, and DeepSeek can all help MLOps engineers move faster, but they do not help in exactly the same way. The prompts below are written to work across all four for evaluation design, deployment reviews, monitoring triage, and drift analysis, and each one carries a model recommendation. ChatGPT works well for versatile day-to-day operational drafting. Claude is often the better fit for structured reasoning and release-ready writing. Gemini is useful when multiple dashboards, logs, and documents need to be synthesized. DeepSeek is strong when the task is logic-heavy and procedural.

These are not generic brainstorming prompts. They are operating prompts designed to plug into real MLOps loops, where evidence matters, uncertainty must be explicit, and every recommendation should connect back to a release gate, rollback plan, or monitoring action.

Turn Vague Success Criteria Into a Release Rubric

Model Recommendation: Claude

Act as a senior MLOps evaluation architect.

I will give you:

- the model use case

- the target users or downstream systems

- sample inputs and outputs

- business constraints

- known failure risks

- operational constraints such as latency, cost, and compliance

Your task is to build a release evaluation rubric with these sections:

1. Primary job to be done

2. Hard deployment blockers

3. Quality metrics to score

4. Risk-weighted failure taxonomy

5. Annotation guide for reviewers

6. Pass, review, and fail thresholds

7. Example scorecard template

8. Questions that must be answered before production approval

Separate model quality issues from business risk issues.

Mark which metrics are release-blocking and which are monitor-only.

If important information is missing, ask only the minimum clarifying questions needed.

The Payoff: This prompt forces model evaluation to become a decision system instead of a vague opinion exercise. It is especially useful when multiple teams are reviewing the same model from different angles and need one shared language for release decisions.
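
Once the rubric exists, its pass, review, and fail thresholds can be encoded so the release gate is mechanical rather than debated. The following is a minimal sketch, not a definitive implementation. The metric names, values, and thresholds are hypothetical, and it assumes every metric is "higher is better".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str          # hypothetical metric name from the rubric
    value: float       # observed score, assumed "higher is better"
    pass_at: float     # at or above this value the metric passes
    review_at: float   # at or above this (but below pass_at) a human reviews it
    blocking: bool     # True = release-blocking, False = monitor-only


def grade(metric: Metric) -> str:
    """Map one metric to pass / review / fail using its own thresholds."""
    if metric.value >= metric.pass_at:
        return "pass"
    if metric.value >= metric.review_at:
        return "review"
    return "fail"


def release_decision(metrics: list[Metric]) -> str:
    """Return the worst grade among release-blocking metrics.

    Monitor-only metrics are still graded for the scorecard,
    but they never block the release.
    """
    grades = {grade(m) for m in metrics if m.blocking}
    if "fail" in grades:
        return "fail"
    if "review" in grades:
        return "review"
    return "pass"


# Illustrative scorecard; all numbers are made up for the example.
scorecard = [
    Metric("answer_accuracy", 0.93, pass_at=0.90, review_at=0.85, blocking=True),
    Metric("policy_compliance", 0.97, pass_at=0.99, review_at=0.95, blocking=True),
    Metric("tone_rating", 0.60, pass_at=0.80, review_at=0.70, blocking=False),
]

for m in scorecard:
    print(f"{m.name}: {grade(m)} ({'blocking' if m.blocking else 'monitor-only'})")
print("decision:", release_decision(scorecard))
```

Here the failing `tone_rating` does not block the release because it is monitor-only, while the borderline `policy_compliance` score sends the release to review.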

Generate a Harder Evaluation Set Before Production

Model Recommendation: DeepSeek

Act as an MLOps engineer building a high-value pre-production evaluation set.

Using the task description, current dataset summary, known incidents, and deployment risks I provide, generate a structured test matrix with these categories:

- happy path

- boundary cases

- malformed inputs

- distribution tail cases

- adversarial or abuse cases

- long-context or multi-step cases

- multilingual or formatting variance cases

- stale or conflicting data cases

For each proposed test case, include:

1. Scenario name

2. Why it matters operationally

3. Expected model behavior

4. Failure signal to watch for

5. Severity if it fails

6. Whether it should become a permanent regression test

Avoid duplicating easy happy-path coverage.

Bias toward cases that are expensive to miss in production.

The Payoff: Most production surprises come from under-tested edge conditions, not average cases. This prompt gives you a sharper evaluation set before traffic or user trust becomes the test harness.

If you are running large batch evaluations, it also helps to estimate the cost of prompt-heavy scoring and judge passes with the AI Token Calculator.
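
The arithmetic behind such an estimate is simple enough to sketch by hand. The function below is an illustration only: the token counts, the number of judge passes, and the per-million-token prices are placeholder assumptions, not real provider pricing.

```python
def estimate_eval_cost(
    num_cases: int,
    input_tokens_per_case: int,
    output_tokens_per_case: int,
    judge_passes: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Rough cost of a batch evaluation where every case is scored
    `judge_passes` times by an LLM judge."""
    calls = num_cases * judge_passes
    input_cost = calls * input_tokens_per_case * usd_per_million_input / 1_000_000
    output_cost = calls * output_tokens_per_case * usd_per_million_output / 1_000_000
    return round(input_cost + output_cost, 2)


# Hypothetical batch: 2,000 cases, 3 judge passes each,
# 1,500 input and 300 output tokens per judge call,
# priced at $3 / $15 per million input / output tokens (made-up prices).
cost = estimate_eval_cost(2000, 1500, 300, 3, 3.0, 15.0)
print(f"estimated cost: ${cost:.2f}")
```

With these placeholder numbers the batch makes 6,000 judge calls, which is why trimming redundant happy-path cases also trims the bill.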

Compare Candidate Models Against Operational Risk

Model Recommendation: Claude

Act as a model release reviewer for an MLOps team.

I will provide results for multiple candidate models, including:

- quality scores

- failure counts by category

- latency

- throughput

- infrastructure fit

- cost characteristics

- safety or compliance observations

Create a release comparison memo with:

1. Executive summary

2. Side-by-side score table

3. Weighted tradeoff analysis

4. Operational risks by model

5. Cases where the best-quality model is not the best deployment choice

6. Final recommendation with rationale

7. What evidence would change the decision

Do not reward average score alone.

Treat severe failure modes, rollback complexity, and observability gaps as first-class decision factors.

The Payoff: This prompt is useful when the best lab result is not automatically the safest production choice. It helps convert scattered benchmark notes into a release memo that engineering, platform, and product teams can actually use.
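
One way to sanity-check the memo's weighted tradeoff analysis is to recompute it yourself, with severe failures acting as a veto instead of just another weighted term. This is a sketch under stated assumptions: the weights, the normalized scores (0 to 1, higher is better), and the zero-tolerance veto are all hypothetical choices.

```python
# Hypothetical weights; they should sum to 1 and reflect your own priorities.
WEIGHTS = {"quality": 0.4, "latency": 0.2, "cost": 0.2, "observability": 0.2}

# Hard veto: a candidate with more severe failures than this is excluded
# no matter how good its weighted score is.
MAX_SEVERE_FAILURES = 0

# Illustrative, made-up results. Scores are normalized to 0..1, higher is better.
candidates = {
    "model_a": {"quality": 0.95, "latency": 0.80, "cost": 0.80,
                "observability": 0.80, "severe_failures": 2},
    "model_b": {"quality": 0.88, "latency": 0.80, "cost": 0.70,
                "observability": 0.80, "severe_failures": 0},
}


def weighted_score(scores: dict) -> float:
    """Weighted sum over the dimensions listed in WEIGHTS."""
    return sum(WEIGHTS[k] * scores[k] for k in WEIGHTS)


def rank(results: dict) -> list:
    """Drop vetoed candidates first, then rank the rest by weighted score."""
    eligible = {
        name: scores
        for name, scores in results.items()
        if scores["severe_failures"] <= MAX_SEVERE_FAILURES
    }
    return sorted(eligible, key=lambda n: weighted_score(eligible[n]), reverse=True)


for name, scores in candidates.items():
    print(name, round(weighted_score(scores), 3), "severe:", scores["severe_failures"])
print("eligible ranking:", rank(candidates))
```

In this example `model_a` has the higher weighted score but is excluded by the veto, which is the situation the prompt asks the memo to call out explicitly.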

Plan a Canary Rollout With Explicit Stop Conditions

Model Recommendation: DeepSeek

Act as a production rollout planner for an MLOps service.

Based on the deployment context I provide, create a staged rollout plan that includes:

- rollout phases by traffic percentage or tenant group

- pre-rollout checks

- guardrail metrics to monitor in each phase

- abort thresholds

- rollback triggers

- fallback behavior

- ownership by team or role

- communication checkpoints

- post-rollout validation steps

Assume the system may fail in ways that are partially silent.

Include both technical metrics and business-signal metrics.

Highlight where manual approval is smarter than full automation.

List the assumptions behind the rollout plan.

The Payoff: A good canary plan is not just a traffic schedule. It is an evidence-driven sequence with clear stop conditions, which is exactly what this prompt is designed to produce.
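
The abort thresholds from the generated plan can be turned into an explicit check that runs at the end of each phase. The sketch below is illustrative: the phase percentages, metric names, and limits are hypothetical, and it assumes every guardrail is "lower is better". A missing metric counts as a breach, since the prompt assumes failures may be partially silent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrail:
    metric: str         # hypothetical metric name
    abort_above: float  # abort if the observed value exceeds this limit


# Hypothetical rollout phases, as percent of traffic.
PHASES = [1, 5, 25, 100]

# Hypothetical guardrails mixing technical and business-signal metrics.
GUARDRAILS = [
    Guardrail("error_rate", 0.02),
    Guardrail("p95_latency_ms", 800.0),
    Guardrail("fallback_rate", 0.10),
]


def evaluate_phase(observed: dict) -> tuple:
    """Return ("proceed" | "abort", list of breach descriptions)."""
    breaches = []
    for g in GUARDRAILS:
        value = observed.get(g.metric)
        if value is None:
            # No data is not good news: treat a silent metric as a stop condition.
            breaches.append(f"{g.metric}: no data")
        elif value > g.abort_above:
            breaches.append(f"{g.metric}: {value} > {g.abort_above}")
    return ("abort" if breaches else "proceed", breaches)


healthy = {"error_rate": 0.01, "p95_latency_ms": 650.0, "fallback_rate": 0.03}
silent = {"error_rate": 0.01, "p95_latency_ms": 650.0}  # fallback_rate missing

print(evaluate_phase(healthy))
print(evaluate_phase(silent))
```

A check like this only covers the automated gates. Phases the plan marks for manual approval still need a named owner to sign off before traffic moves to the next percentage in `PHASES`.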

Convert Logs and Metrics Into a Monitoring Triage Report

Model Recommendation: Gemini

Act as an incident triage analyst for an MLOps platform.

I will provide some combination of:

- alert text

- logs

- traces

- latency charts

- error-rate charts

- recent deployment history

- affected endpoints or tenants

Produce a triage report with these sections:

1. Observed facts only

2. Likely blast radius

3. Timeline of known events

4. Top hypotheses ranked by evidence

5. What evidence supports each hypothesis

6. What evidence is still missing

7. Highest-leverage next checks

8. Instrumentation gaps that make diagnosis harder

Do not collapse facts and interpretation into one list.

Be explicit about uncertainty.

The Payoff: This prompt is strong when alerts are noisy and the team needs a fast evidence summary before a call turns into guesswork. It works especially well if your observability practice already borrows ideas from Full-Stack AI Observability: Tracing Agentic Loops with OpenTelemetry & Arize.

Investigate Whether an Alert Is Noise, Regressed Code, or Infrastructure

Model Recommendation: DeepSeek

Act as a root-cause analyst for a production MLOps alert.

Using the evidence I provide, classify the incident into one or more of these buckets:

- model behavior regression

- feature or data pipeline issue

- feature skew or schema mismatch

- infrastructure bottleneck

- bad rollout sequencing

- observability or instrumentation defect

- normal traffic mix change

Output:

1. Most likely classification

2. Alternative classifications still plausible

3. Evidence for and against each one

4. Fastest next diagnostic step

5. Common false-positive interpretations

6. Recommended investigation order for the next 30 minutes

Do not assume the first anomaly is the root cause.

Favor the smallest test that can disconfirm a bad hypothesis.

The Payoff: This is useful when the team is losing time on the wrong branch of the investigation tree. It gives you a disciplined way to separate noisy symptoms from the most likely root cause.

Separate Data Drift, Concept Drift, and Instrumentation Drift

Model Recommendation: DeepSeek

Act as an MLOps drift analyst.

I will provide baseline and current distributions, feature summaries, label lag details, business context, upstream pipeline changes, and monitoring notes.

Decide whether the evidence points to:

- data drift

- concept drift

- label delay or feedback lag

- instrumentation drift

- seasonal or expected business change

- a mixed event involving more than one factor

For your answer, include:

1. Classification

2. Evidence supporting it

3. Evidence that would weaken the conclusion

4. False-positive traps

5. What should be checked next

6. Whether retraining, recalibration, or no action is most justified right now

Do not recommend retraining by default.

Quantify uncertainty wherever possible.

The Payoff: Drift alerts are only useful if they change action. This prompt helps prevent teams from treating every distribution shift as a retraining event when the real issue might be upstream instrumentation, seasonal behavior, or delayed labels.
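
The prompt works better when the baseline and current distributions arrive with a number attached. One common summary for a single binned feature is the Population Stability Index (PSI); the sketch below is one possible way to compute it, not the only one. The bin counts are made up, and the 0.1 and 0.25 cutoffs in the comments are a widely used rule of thumb rather than a guarantee. PSI speaks only to input data drift, so it cannot by itself distinguish concept drift, label lag, or instrumentation changes.

```python
import math


def psi(baseline_counts: list, current_counts: list, eps: float = 1e-6) -> float:
    """Population Stability Index over pre-binned counts.

    PSI = sum over bins of (q - p) * ln(q / p), where p and q are the
    baseline and current bin proportions. `eps` floors empty bins so the
    logarithm stays defined.
    """
    if len(baseline_counts) != len(current_counts):
        raise ValueError("baseline and current must use the same bins")
    base_total = sum(baseline_counts)
    cur_total = sum(current_counts)
    total = 0.0
    for b, c in zip(baseline_counts, current_counts):
        p = max(b / base_total, eps)
        q = max(c / cur_total, eps)
        total += (q - p) * math.log(q / p)
    return total


# Made-up bin counts for one feature.
baseline = [50, 50]
current = [80, 20]

value = psi(baseline, current)
# Rule of thumb (an assumption, tune per feature):
# below 0.1 stable, 0.1 to 0.25 worth a look, above 0.25 a significant shift.
print(f"PSI = {value:.3f}")
```

A high PSI is evidence to hand to the prompt, not a retraining trigger. Upstream pipeline changes and seasonality can produce the same number.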

Decide Whether to Retrain, Recalibrate, or Roll Back

Model Recommendation: Claude

Act as a change-review advisor for an MLOps platform.

Given evidence from evaluation, deployment monitoring, incident analysis, and drift checks, recommend the best next action from this set:

- no action

- threshold adjustment

- calibration update

- prompt or policy change

- feature pipeline fix

- retraining

- rollback

For each option, provide:

1. Expected benefit

2. Operational risk

3. Data or evidence still needed

4. Validation plan

5. Reversibility

6. Recommended owner

End with:

- the single best next move

- why it is preferable now

- the smallest safe follow-up step

The Payoff: This prompt is valuable when several responses are technically possible but only one is operationally justified. It helps change-review meetings focus on evidence, reversibility, and validation instead of instinct.

Write a Clean Incident Update for Stakeholders

Model Recommendation: ChatGPT

Act as a technical communications partner for an MLOps incident.

Using the facts I provide, write two stakeholder updates:

1. a short executive-safe version

2. a more detailed version for product, support, and engineering partners

Requirements:

- preserve technical accuracy

- state what is known

- state what is not yet known

- describe customer or business impact in plain language

- avoid speculation

- include the next checkpoint or decision point

- keep blame out of the message

If my draft is unclear, rewrite it for clarity without changing the facts.

The Payoff: MLOps problems are often made worse by muddy communication. This prompt helps you keep stakeholder updates crisp while the investigation stays grounded in verified facts.

Pro-Tip: Chain the Prompts Like an MLOps Runbook

Do not use these prompts as isolated chats. Start with the evaluation rubric, feed its failure taxonomy into the harder test-set prompt, push those results into the candidate comparison, then use the rollout, monitoring, and drift prompts as the model reaches production. That style of prompt chaining is much closer to Prompt Engineering 3.0: The End of Prompting and the Rise of Flow Engineering than one-off prompting.

Keep every output as a reusable artifact. The next prompt becomes better when it consumes a real rubric, a real incident summary, or a real drift table instead of relying on memory.
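
The chaining idea can be sketched as a small loop that saves each output as a file and feeds it into the next prompt. Everything here is an assumption made for illustration: `call_model` stands in for whichever model client you use, `stub_model` is a fake so the example runs offline, and the step names and templates are abbreviated placeholders for the full prompts above.

```python
import tempfile
from pathlib import Path
from typing import Callable

# Abbreviated, hypothetical templates; {previous} receives the prior artifact.
STEPS = [
    ("01_rubric", "Build a release evaluation rubric for this use case.{previous}"),
    ("02_test_matrix", "Using the failure taxonomy in this rubric, generate a test matrix:\n{previous}"),
    ("03_comparison", "Using these test results, write a release comparison memo:\n{previous}"),
]


def run_chain(steps: list, call_model: Callable[[str], str], artifact_dir: Path) -> dict:
    """Run prompts in order, persisting every output as a reusable artifact."""
    artifact_dir.mkdir(parents=True, exist_ok=True)
    outputs = {}
    previous = ""
    for name, template in steps:
        prompt = template.format(previous=previous)
        output = call_model(prompt)
        # Save the artifact so later steps (and people) consume the real thing.
        (artifact_dir / f"{name}.md").write_text(output, encoding="utf-8")
        outputs[name] = output
        previous = output
    return outputs


# Stub standing in for a real model call, so the sketch runs without network access.
seen_prompts = []


def stub_model(prompt: str) -> str:
    seen_prompts.append(prompt)
    return f"artifact {len(seen_prompts)}"


with tempfile.TemporaryDirectory() as tmp:
    results = run_chain(STEPS, stub_model, Path(tmp))
    saved = sorted(p.name for p in Path(tmp).iterdir())

print(saved)
```

In practice you would review and edit each saved artifact before it flows into the next step, instead of piping raw model output straight through.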

The long-term advantage is not faster chatting. It is better operational judgment: tighter release criteria, cleaner rollout gates, sharper monitoring triage, and more disciplined drift response. Used this way, AI prompts help MLOps engineers spend less time sorting noise and more time making reliable production decisions.