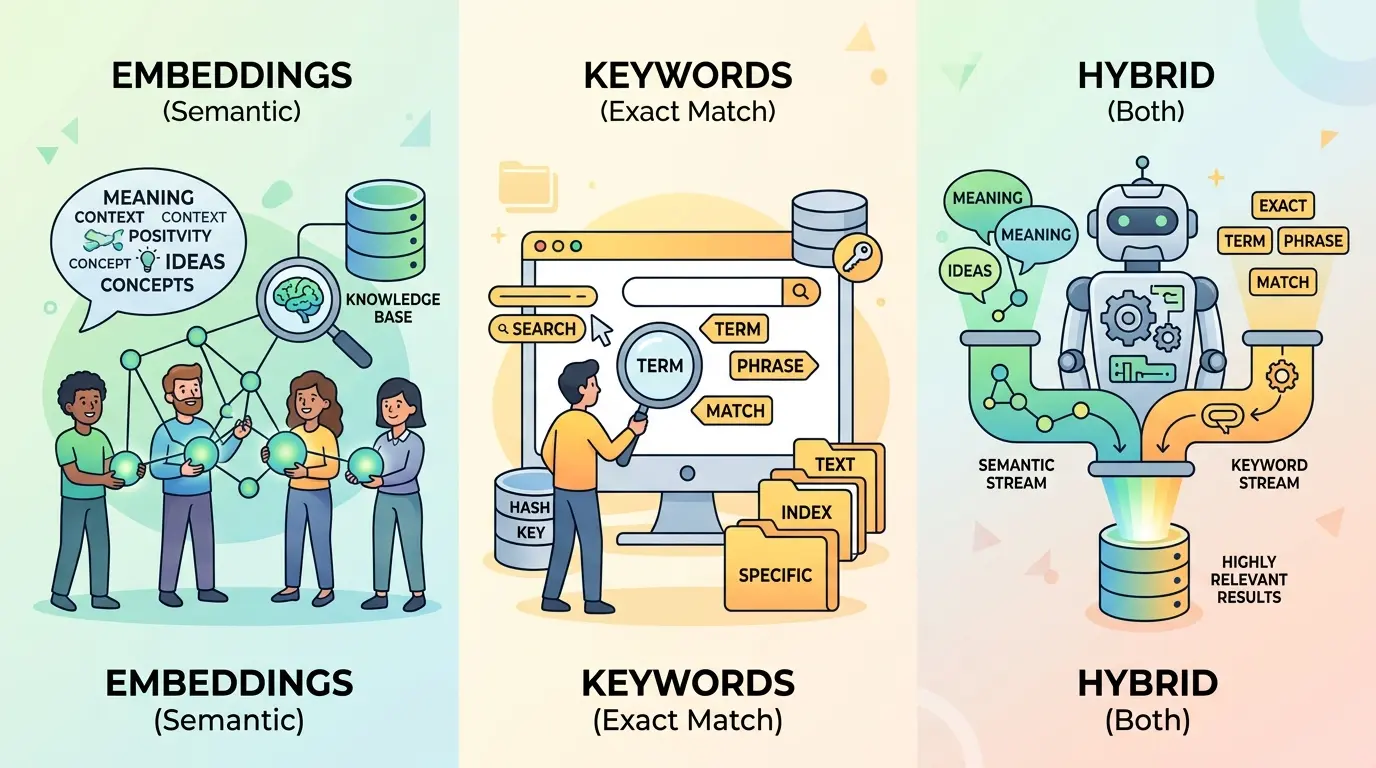

Most retrieval systems do not fail because the model is weak. They fail because teams pick one retrieval method too early, index the corpus once, and then hope every query behaves the same way. That breaks fast in support search, internal knowledge bases, enterprise RAG, and product documentation because user intent is mixed: some questions need exact terms, some need semantic similarity, and some need both.

ChatGPT, Gemini, Claude, and DeepSeek can all help with this work, but they are useful in slightly different ways. The prompts below are optimized as a universal foundation for search engineers, RAG builders, analytics teams, and AI product owners who need to decide when to use keywords, embeddings, or hybrid search. ChatGPT works well for day-to-day iteration, Claude is often the better fit for careful reasoning and structured tradeoffs, Gemini is useful when you need broader input synthesis, and DeepSeek is strong at technical decomposition and ranking logic.

If your team is still deciding whether retrieval belongs in the architecture at all, The Context Window Trap: When to Choose RAG vs. Long-Context Models for Business Data is a useful companion before you start tuning the search layer.

Audit Query Intent Before You Pick a Retrieval Method

Model Recommendation: DeepSeek is often the better fit for decomposing query types, failure costs, and routing logic.

Act as a retrieval systems architect.

I am designing search or RAG for this use case:

[DESCRIBE PRODUCT, USERS, CORPUS, AND FAILURE COST]

Here are representative user queries:

[PASTE 20 TO 50 REAL QUERIES]

Here are representative document types:

[PASTE DOC TYPES, FIELD STRUCTURE, AND SAMPLE SNIPPETS]

For each query cluster, classify whether success depends mostly on:

1. exact term matching

2. semantic similarity

3. metadata filtering

4. temporal freshness

5. hybrid retrieval

Then produce:

- a query taxonomy

- the likely failure mode for each taxonomy group

- the best primary retrieval strategy for each group

- when keyword search should override embeddings

- when embeddings should override keyword search

- when hybrid search is justified

- which signals should be logged for future evaluation

Do not give generic advice. Base the answer on the actual query and document patterns.

The Payoff: This prompt stops teams from arguing in the abstract. It forces the retrieval decision to follow query shape, document structure, and business risk instead of vendor marketing or personal preference.

Build a Keyword-First Retrieval Plan for Exact-Match Queries

Model Recommendation: ChatGPT works well for practical daily prompt tasks like designing lexical search rules, synonym sets, and fallback behavior.

You are helping me design a keyword-first retrieval system.

Use case:

[DESCRIBE SEARCH PRODUCT]

Corpus fields:

[LIST TITLE, BODY, TAGS, IDs, SKU FIELDS, POLICY CODES, ERROR CODES, METADATA]

Critical exact-match concepts:

[LIST IDENTIFIERS, FIELD NAMES, ACRONYMS, LEGAL TERMS, PRODUCT NAMES, COMMANDS]

Design a keyword retrieval plan that includes:

- which fields should receive the highest boosts

- where phrase matching matters most

- where fuzzy matching is safe and where it is dangerous

- how to handle acronyms, aliases, and product synonyms

- stop-word and stemming cautions

- metadata filters that should run before ranking

- fallback logic when no exact match is found

- 15 evaluation queries that would prove the system is working

Return the result as:

1. ranking logic

2. query rewrite rules

3. synonym policy

4. failure risks

5. evaluation checklist

The Payoff: Keyword search is still the right answer when queries depend on identifiers, codes, field names, contract language, or compliance-sensitive phrasing. This prompt helps you preserve that precision instead of accidentally smoothing it away with vector similarity.

Design an Embedding-First Strategy for Semantic Recall

Model Recommendation: Claude is often the better fit when you need careful reasoning about semantic drift, paraphrase behavior, and chunk boundaries.

Act as a semantic retrieval specialist.

I need an embedding-first retrieval design for this corpus:

[DESCRIBE CORPUS, DOC LENGTH, LANGUAGE STYLE, AND USER INTENT]

Representative queries:

[PASTE QUERIES THAT USE PARAPHRASES, NATURAL LANGUAGE, OR INDIRECT WORDING]

Representative documents:

[PASTE SAMPLE CHUNKS OR DOC EXCERPTS]

Design an embedding-based retrieval strategy that covers:

- which content should be embedded and which should stay metadata-only

- ideal chunking strategy by document type

- parent-child or section-level retrieval recommendations

- how to preserve headings, tables, and citations

- candidate retrieval depth before reranking

- thresholding or filtering guidance

- common false positive patterns to watch for

- when semantic search will outperform keyword search

- when semantic search should not be trusted alone

Finish with a short decision rule for when embeddings are the primary retrieval engine.

The Payoff: Embeddings usually help when users describe the right concept with the wrong vocabulary. They are strong for paraphrase-heavy, natural-language, and cross-document discovery, but only if chunking, metadata, and reranking are handled with discipline.

When the discussion shifts from retrieval logic to vector infrastructure choices, Vector Database Showdown: Pinecone vs. Milvus vs. Weaviate for Enterprise RAG is a practical next read.

Combine Both in a Hybrid Ranking Pipeline

Model Recommendation: DeepSeek is useful when the task requires score fusion, routing logic, and a clean breakdown of ranking stages.

You are designing a production hybrid search system.

Inputs:

- corpus description: [DESCRIBE CORPUS]

- user query patterns: [PASTE QUERY TYPES]

- metadata structure: [LIST FILTERS, FRESHNESS SIGNALS, ACCESS CONTROLS]

- latency budget: [STATE TARGET]

- precision vs recall priority: [STATE BUSINESS GOAL]

Design a hybrid retrieval pipeline that includes:

- keyword retrieval stage

- embedding retrieval stage

- metadata filtering order

- score normalization method

- whether to use weighted fusion, reciprocal rank fusion, or another strategy

- reranking stage and what it should evaluate

- routing rules for exact-match queries vs semantic queries

- logging fields for offline evaluation

- conditions where hybrid search is worth the added complexity

- conditions where hybrid search is unnecessary overhead

Return the answer as an architecture plan with stage-by-stage rationale.

The Payoff: Hybrid search is often the safest production baseline when traffic mixes exact-match intent and semantic intent. This prompt helps you avoid a shallow “just combine both” answer and instead build a real ranking pipeline with explicit tradeoffs.

Tune Chunking and Metadata Before Blaming Retrieval

Model Recommendation: Gemini works well when you want to synthesize multiple document examples, schema details, and indexing constraints into one chunking plan.

Act as a retrieval index design reviewer.

I suspect my retrieval quality problem is caused by chunking or metadata design.

Here are my document types:

[LIST DOC TYPES]

Here are sample documents or excerpts:

[PASTE SEVERAL EXAMPLES]

Here are the metadata fields available:

[LIST FIELDS]

Here are failed queries and bad retrieval results:

[PASTE FAILURES]

Propose:

- chunking strategy by document type

- optimal chunk size range and overlap policy

- when to keep parent-child relationships

- which metadata fields should be indexed for filtering vs ranking vs display

- how to handle tables, lists, code blocks, and repeated headers

- how to preserve enough context for answer grounding without bloating retrieval

- a short validation checklist for reindexing safely

Make the answer specific to the examples I provided.

The Payoff: Many retrieval failures are actually index design failures. If chunk boundaries destroy meaning or metadata is too thin, neither keyword search nor embeddings will look as good as they should.

If you want to inspect that problem visually before reindexing, the RAG Chunking Visualizer is a useful tool for making chunk boundaries and context loss easier to see.

Create an Evaluation Set That Can Falsify Your Assumptions

Model Recommendation: Claude is often the better fit for building a clean relevance rubric and turning messy query examples into a defensible evaluation framework.

Help me build an offline retrieval evaluation set.

System goal:

[DESCRIBE WHAT GOOD RETRIEVAL LOOKS LIKE]

Query samples:

[PASTE REAL QUERIES]

Available documents or document IDs:

[PASTE INDEX OR SAMPLE DOC MAP]

I need an evaluation framework that compares:

- keyword retrieval

- embedding retrieval

- hybrid retrieval

Please create:

- query categories

- a graded relevance rubric

- expected positive documents for each sample query

- metrics to track, including recall@k and ranking quality

- failure tags for lexical miss, semantic miss, metadata miss, chunking issue, reranker issue, and freshness issue

- a comparison template that shows which strategy wins by query class rather than only by overall average

- a recommendation for minimum sample size before trusting the result

Do not let the system hide bad exact-match behavior behind global averages.

The Payoff: Retrieval decisions go wrong when teams optimize for a single blended metric. This prompt makes the evaluation granular enough to reveal where keywords, embeddings, and hybrid search each truly win.

Run Failure Analysis and Add Routing Rules

Model Recommendation: ChatGPT works well for operational debugging passes where you need quick iteration across many failed queries and remediation ideas.

Act as a retrieval incident analyst.

I will give you failed search or RAG cases.

For each case, diagnose the root cause and prescribe the smallest fix that is likely to work.

Use this taxonomy:

- exact term mismatch

- synonym or alias gap

- semantic false positive

- chunking failure

- metadata filter issue

- stale or missing document

- reranker failure

- access control problem

- multilingual mismatch

- ambiguous query that needs routing

For each failure, return:

1. root cause

2. whether keyword, embedding, or hybrid retrieval should have handled it

3. recommended fix

4. whether the fix belongs in indexing, query rewriting, fusion, reranking, or UX

5. whether a routing rule should be added

6. one metric or log signal to monitor after the fix

Here are the failures:

[PASTE FAILED QUERIES, TOP RESULTS, EXPECTED RESULTS, AND ANY USER FEEDBACK]

The Payoff: This turns retrieval tuning into an operating process instead of a one-time architecture choice. Over time, the routing rules you add here are what make a search system feel reliable.

Pro-Tip

Chain these prompts in order instead of using them as isolated one-offs. Start with the query intent audit, move into strategy design, then build the evaluation set, and only after that run failure analysis. The more real queries, judged results, and document samples you provide, the more useful the model comparison becomes.

The best retrieval strategy is rarely a universal winner. Keywords win when exact language matters. Embeddings win when users describe ideas indirectly. Hybrid search is usually the strongest operational default when your traffic contains both, but only if you evaluate it by query class and tune the index, fusion, and routing layers with evidence instead of instinct.