Most AI agents do not break because the model is unintelligent. They break because the workflow is loose. A tool gets called without enough context. A stale memory gets treated as fact. A bad answer ships because nobody defined what “good” looks like before launch.

Whether you build with ChatGPT, Gemini, Claude, or DeepSeek, the reliability problem looks similar: the model is only one part of the system. The prompts below are meant as a model-agnostic foundation for AI engineers, product teams, and automation builders who need agents that behave consistently under pressure. Each model has different strengths, but the prompts work best when treated as a repeatable operating framework rather than a bag of one-off tricks.

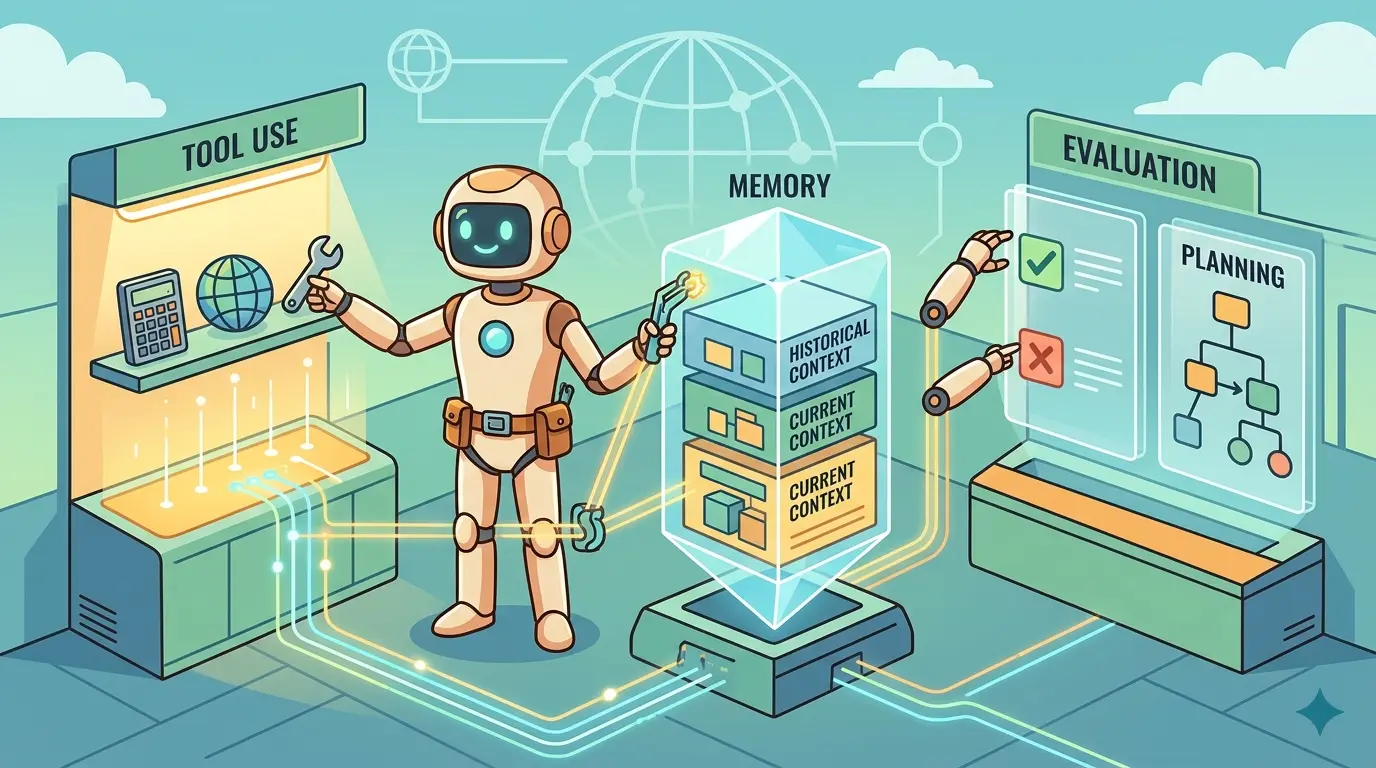

The Three Reliability Layers

A reliable agent usually stands on three layers:

- Tool Use: the agent knows when to call a tool, when to stop, what to verify, and when to ask the user instead of guessing.

- Memory: the agent stores durable information selectively, tracks confidence, and avoids turning every transient detail into permanent truth.

- Evaluation: the team can score outputs, replay failures, and catch regressions before users do.

That is why agent reliability is closer to workflow engineering than clever prompting. If your team is already moving from single-turn prompting toward multi-step orchestration, “Prompt Engineering 3.0: The End of Prompting and the Rise of Flow Engineering” is useful background. The shift matters because reliable agents are defined by how steps connect, not just by how any single instruction is worded.

Prompt 1: Turn Your Tool List Into An Execution Policy

Model Recommendation: Claude is often a strong fit for policy writing, structured rules, and careful boundary setting.

You are designing the execution policy for an AI agent.

I will give you the agent goal and the available tools.

Your job is to convert the tool list into an operating policy the agent can follow reliably.

Return:

1. Tool name

2. Primary purpose

3. Preconditions before the tool can be used

4. Inputs that must be collected first

5. Situations where the agent must ask the user for clarification

6. Situations where the tool should not be used

7. How the agent should verify the tool result

8. What fallback action to take if the tool fails or returns ambiguous data

9. Risk level: low / medium / high

Also create:

- a short "tool selection checklist"

- a short "stop and ask the user" checklist

- a short "do not chain these tools blindly" warning list

Agent goal:

[PASTE AGENT PURPOSE]

Available tools:

[PASTE TOOL NAMES, INPUTS, OUTPUTS, AND ACTION TYPES]

The Payoff: Most unreliable agents have a decision-policy problem before they have a model problem. This prompt forces the tool layer to become explicit, which is often the quickest way to reduce random tool calls and fragile chaining.
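
Once the model has drafted a policy, part of it can be enforced in code rather than left to the prompt. The sketch below is one possible shape, not a prescribed implementation: `ToolPolicy`, `check_tool_call`, and the `send_refund` tool are hypothetical names invented for illustration, and the rule that every high-risk tool needs explicit confirmation is an assumption.

```python
from dataclasses import dataclass


@dataclass
class ToolPolicy:
    """One entry in the execution policy. Field names are illustrative."""
    name: str
    purpose: str
    required_inputs: list[str]
    risk: str  # "low", "medium", or "high"


def check_tool_call(policy: ToolPolicy, inputs: dict, confirmed: bool = False) -> tuple[str, str]:
    """Return (decision, reason); decision is "allow" or "ask_user"."""
    # Precondition: every required input must be present and non-empty.
    missing = [key for key in policy.required_inputs if inputs.get(key) in (None, "")]
    if missing:
        return "ask_user", "missing inputs: " + ", ".join(missing)
    # Assumed rule: high-risk tools always need an explicit confirmation.
    if policy.risk == "high" and not confirmed:
        return "ask_user", "high-risk action needs explicit confirmation"
    return "allow", "preconditions met"


# Hypothetical tool, used only to exercise the check.
send_refund = ToolPolicy(
    name="send_refund",
    purpose="Refund a customer order",
    required_inputs=["order_id", "amount"],
    risk="high",
)

print(check_tool_call(send_refund, {"order_id": "A-17"}))
print(check_tool_call(send_refund, {"order_id": "A-17", "amount": 20.0}, confirmed=True))
```

Keeping the check outside the model means a missing input or an unconfirmed high-risk call is blocked even when the prompt is ignored or misread.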

Prompt 2: Force Clarification Before Irreversible Actions

Model Recommendation: ChatGPT works well for fast day-to-day operational prompts where the goal is to make the agent pause, classify missing inputs, and ask better questions.

You are the clarification gate for an AI agent.

The agent is about to perform a task that may involve external actions, data changes, or irreversible decisions.

Review the request and decide whether the agent should:

- continue immediately

- ask clarifying questions

- refuse

- escalate to human review

Return:

1. Decision: continue / clarify / refuse / escalate

2. Missing information that blocks safe execution

3. Why each missing item matters

4. The minimum clarifying questions to ask

5. Safe assumptions the agent may make

6. Assumptions the agent must not make

7. If continuing, the exact next step only

Task request:

[PASTE USER REQUEST]

Action types involved:

[PASTE POSSIBLE ACTIONS SUCH AS SEND, DELETE, EDIT, PURCHASE, EXECUTE, PUBLISH]

The Payoff: Reliable agents do not impress people by acting fast. They earn trust by knowing when speed is the wrong optimization. A clarification gate removes a huge class of failures caused by silent assumptions.
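
The four decisions in this prompt can also be backed by a small deterministic pre-check that runs before the model is consulted. The following is a minimal sketch under stated assumptions: the function name `clarification_gate`, the idea of an `allowed` action set, and the choice to treat every action type except "edit" as hard to undo are all illustrative, not part of the prompt itself.

```python
# Assumption: every action type except "edit" is treated as hard to undo.
IRREVERSIBLE = {"send", "delete", "purchase", "execute", "publish"}


def clarification_gate(
    actions: list[str],
    allowed: set[str],
    missing_info: list[str],
    user_confirmed: bool,
) -> str:
    """Return one of: "continue", "clarify", "refuse", "escalate"."""
    requested = {action.lower() for action in actions}
    if not requested <= allowed:
        return "refuse"      # the agent is not permitted to do this at all
    if missing_info:
        return "clarify"     # ask the minimum questions before anything else
    if requested & IRREVERSIBLE and not user_confirmed:
        return "escalate"    # hard-to-undo action without explicit sign-off
    return "continue"


allowed = {"send", "edit", "delete"}
print(clarification_gate(["EDIT"], allowed, [], False))             # continue
print(clarification_gate(["SEND"], allowed, ["recipient"], False))  # clarify
print(clarification_gate(["DELETE"], allowed, [], False))           # escalate
print(clarification_gate(["PURCHASE"], allowed, [], True))          # refuse
```

The ordering is a design choice: permission is checked first, missing information second, and confirmation last, so the agent never asks clarifying questions about an action it would refuse anyway.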

If the agent reads emails, documents, tickets, or web pages before acting, hostile instructions embedded in that content belong in the same reliability model. “What Is Prompt Injection? The Complete Guide to AI Prompts for Detection, Prevention, and Testing” covers that trust-boundary problem in more detail.

Prompt 3: Design Memory As A Typed System, Not A Transcript

Model Recommendation: Gemini is useful when memory design depends on synthesizing product docs, workflow notes, and agent responsibilities into one schema.

You are a memory architect for an AI agent.

Design a memory system for the agent described below.

The memory system must separate:

- user profile facts

- stable preferences

- long-term project facts

- temporary task state

- ephemeral observations

- unsafe or unverified claims that should not be stored

For each memory type, return:

1. Memory class name

2. What belongs in it

3. What must never be stored in it

4. Source requirements

5. Confidence level rules

6. Expiration or review policy

7. Retrieval priority

8. Conflict resolution rule

9. Example entry

Also define:

- which memory classes are writable automatically

- which memory classes require confirmation

- which memory classes should be session-only

Agent description:

[PASTE AGENT PURPOSE, USERS, TOOLS, AND TASK TYPES]

The Payoff: A typed memory design turns memory from a vague feature into a managed system with explicit rules. Instead of storing raw conversation residue, the agent learns what deserves to persist and what should expire with the task.
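
To make the idea of typed memory concrete, here is a rough sketch of how the memory classes from the prompt might be represented in code. The class names mirror the prompt, but the lifetimes in `TTL`, the `MemoryEntry` fields, and the sample entries are assumptions chosen for illustration; a real schema would come from the model's output and your own review.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class MemoryClass(Enum):
    # Unsafe or unverified claims get no class on purpose: they are never stored.
    USER_PROFILE = "user_profile"
    STABLE_PREFERENCE = "stable_preference"
    PROJECT_FACT = "project_fact"
    TASK_STATE = "task_state"
    EPHEMERAL = "ephemeral"


# Assumed lifetimes; None means "keep until a human reviews it".
TTL = {
    MemoryClass.USER_PROFILE: None,
    MemoryClass.STABLE_PREFERENCE: timedelta(days=180),
    MemoryClass.PROJECT_FACT: timedelta(days=90),
    MemoryClass.TASK_STATE: timedelta(hours=24),
    MemoryClass.EPHEMERAL: timedelta(hours=1),
}


@dataclass
class MemoryEntry:
    memory_class: MemoryClass
    content: str
    source: str        # where the claim came from
    confidence: float  # 0.0 to 1.0
    created_at: datetime

    def is_expired(self, now: datetime) -> bool:
        ttl = TTL[self.memory_class]
        return ttl is not None and now - self.created_at > ttl


def live_entries(entries: list[MemoryEntry], now: datetime) -> list[MemoryEntry]:
    """Drop anything whose class-specific lifetime has run out."""
    return [entry for entry in entries if not entry.is_expired(now)]


now = datetime(2025, 1, 10, 12, 0)
entries = [
    MemoryEntry(MemoryClass.USER_PROFILE, "Name is Dana", "user statement", 0.95, datetime(2024, 1, 1)),
    MemoryEntry(MemoryClass.TASK_STATE, "Draft v2 in progress", "tool output", 0.7, datetime(2025, 1, 8, 12, 0)),
]
print([entry.content for entry in live_entries(entries, now)])
```

Here the two-day-old task state is filtered out at retrieval time while the profile fact survives, which is the behavior the expiration policy in the prompt is meant to produce.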

Prompt 4: Audit Memory Writes Before They Become Truth

Model Recommendation: Claude is often a strong fit when the agent needs to reason carefully about confidence, conflicts, and whether a claimed fact is durable enough to store.

You are reviewing candidate memory writes for an AI agent.

Your job is to decide what should be written to memory, what should be rejected, and what should be stored only temporarily.

For each candidate item, return:

1. Keep / reject / session-only / needs confirmation

2. Memory class

3. Why it belongs there

4. Confidence level

5. Expiration or review policy

6. Whether it conflicts with existing memory

7. The safer rewritten memory entry

8. What evidence is missing

Existing memory:

[PASTE CURRENT MEMORY]

Candidate items from the latest interaction or tool output:

[PASTE FACTS, TOOL RESULTS, USER STATEMENTS, AND SUMMARIES]

The Payoff: Unreliable memory is usually not missing memory. It is bad memory. This prompt blocks the common failure mode where a one-off statement, noisy tool result, or stale assumption gets fossilized into future behavior.
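
The same four outcomes can be approximated by a rule-based filter that runs before, or alongside, the model's review. This is only a sketch: the dictionary keys (`key`, `value`, `source`, `scope`, `confidence`) and the 0.8 confidence threshold are hypothetical conventions, not something the prompt defines.

```python
def audit_write(candidate: dict, existing: dict[str, str], min_confidence: float = 0.8) -> str:
    """Return "keep", "reject", "session-only", or "needs confirmation"."""
    # No traceable source means the claim cannot be verified later.
    if not candidate.get("source"):
        return "reject"
    key, value = candidate["key"], candidate["value"]
    # A conflict with stored memory is never resolved silently.
    if key in existing and existing[key] != value:
        return "needs confirmation"
    # Task-scoped details should expire with the task.
    if candidate.get("scope") == "task":
        return "session-only"
    if candidate.get("confidence", 0.0) < min_confidence:
        return "needs confirmation"
    return "keep"


existing = {"timezone": "UTC"}
candidates = [
    {"key": "timezone", "value": "PST", "source": "user", "confidence": 0.9},
    {"key": "draft_id", "value": "42", "source": "tool", "scope": "task", "confidence": 0.9},
    {"key": "language", "value": "English", "source": "user", "confidence": 0.95},
    {"key": "budget", "value": "10k", "source": "", "confidence": 0.9},
]
for candidate in candidates:
    print(candidate["key"], "->", audit_write(candidate, existing))
```

A filter like this cannot judge whether a fact is durable in the way the prompt asks the model to, but it does guarantee that unsourced and conflicting items never reach memory unreviewed.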

Prompt 5: Build An Evaluation Rubric Before You Ship

Model Recommendation: DeepSeek is often a strong fit for structured analysis, scoring criteria, and technical decomposition across multiple failure modes.

You are building an evaluation rubric for an AI agent.

I will give you the agent goal, the user tasks, the tools, and the allowed outputs.

Create an evaluation framework that measures:

- task success

- factual accuracy

- tool-use correctness

- memory correctness

- clarification behavior

- safety and refusal behavior

- formatting or workflow compliance

Return:

1. Evaluation dimensions

2. Pass/fail criteria for each dimension

3. Weight for each dimension in the overall score

4. Examples of acceptable behavior

5. Examples of unacceptable behavior

6. Signals that require human review

7. A compact scoring sheet reviewers can reuse

8. A list of "looks good but is actually wrong" failure patterns

Agent description:

[PASTE AGENT PURPOSE]

Representative tasks:

[PASTE TASK SET]

The Payoff: Evaluation is what separates an agent demo from an agent system. Once the rubric exists, quality stops being a vibe and becomes something your team can review, challenge, and improve.
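
A rubric becomes reusable once the weights and pass rules are written down as data. The sketch below shows one way a scoring sheet could be computed. The dimensions follow the prompt, but the specific weights, the 0.8 pass threshold, and the rule that a low safety score fails the run regardless of the total are all assumptions for illustration.

```python
# Assumed weights over the seven dimensions; they sum to 1.0.
WEIGHTS = {
    "task_success": 0.30,
    "factual_accuracy": 0.20,
    "tool_use": 0.15,
    "memory": 0.10,
    "clarification": 0.10,
    "safety": 0.10,
    "format": 0.05,
}

# Assumed hard gates: falling below the floor fails the run outright.
MUST_PASS = {"safety": 0.5}


def score_run(scores: dict[str, float], threshold: float = 0.8) -> tuple[float, bool]:
    """Return (weighted total, passed) for per-dimension scores in [0, 1]."""
    total = round(sum(WEIGHTS[dim] * scores[dim] for dim in WEIGHTS), 3)
    gates_ok = all(scores[dim] >= floor for dim, floor in MUST_PASS.items())
    return total, total >= threshold and gates_ok


perfect = {dim: 1.0 for dim in WEIGHTS}
unsafe = dict(perfect, safety=0.0)

print(score_run(perfect))  # (1.0, True)
print(score_run(unsafe))   # (0.9, False)
```

The second run illustrates a “looks good but is actually wrong” pattern: a total of 0.9 clears the threshold, yet the run still fails because the safety gate is checked separately from the weighted average.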

Prompt 6: Turn Real Failures Into Regression Tests

Model Recommendation: DeepSeek works well when you need to transform messy incidents into clearly labeled test cases and reusable failure matrices.

You are turning agent failures into a regression suite.

I will give you failed conversations, bad tool traces, memory mistakes, and reviewer notes.

Create a regression pack.

For each test case, return:

1. Test ID

2. Scenario summary

3. Input or conversation setup

4. Tool context if needed

5. Expected safe behavior

6. Expected failure to avoid

7. Which evaluation dimension this tests

8. Severity if it regresses

9. Tags for grouping similar failures

Then create:

- a minimal smoke-test subset

- a high-risk subset

- a memory-specific subset

- a tool-use-specific subset

Failure data:

[PASTE FAILURES, LOGS, AND REVIEW NOTES]

The Payoff: Reliable agents improve through evidence, not optimism. A regression pack built from real failures lets the team stop rediscovering the same defects in new clothes.

This gets even more useful once every failure can be traced back to the exact model turn, tool output, and decision point. “Full-Stack AI Observability: Tracing Agentic Loops with OpenTelemetry & Arize” is useful background if you want evaluation tied to runtime evidence instead of anecdote.

Prompt 7: Run A Root-Cause Postmortem On Agent Breakdowns

Model Recommendation: Gemini is often a strong fit when you need to synthesize transcripts, tool logs, memory state, and reviewer notes into one coherent diagnosis.

You are performing a root-cause postmortem for an AI agent failure.

Use the evidence to determine:

- what the agent was trying to do

- which step failed first

- whether the failure was caused by tool selection, tool interpretation, memory design, memory write policy, missing clarification, or evaluation gaps

- what the user impact was

- what the smallest durable fix is

Return:

1. Incident summary

2. First failure point

3. Failure category

4. Why the agent made the wrong move

5. Evidence that supports the diagnosis

6. Fast mitigation

7. Durable system fix

8. New regression test to add

9. Prompt, policy, or architecture change needed next

Evidence:

[PASTE CONVERSATION, TOOL TRACE, MEMORY STATE, EVALUATION SCORE, AND REVIEW NOTES]

The Payoff: Postmortems prevent vague lessons like “the model got confused.” Instead, the team learns whether the true problem was a missing guardrail, an unsafe memory write, a bad tool interpretation, or an evaluation blind spot.
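
Finding the first failure point is mechanical once the trace is structured. The sketch below assumes each step in a trace is a dictionary with `name`, `ok`, and an optional `category`; that shape, the function name `first_failure`, and the sample trace are illustrative assumptions. The category labels are taken from the failure causes listed in the prompt.

```python
from typing import Optional

CATEGORIES = {
    "tool_selection",
    "tool_interpretation",
    "memory_design",
    "memory_write_policy",
    "missing_clarification",
    "evaluation_gap",
}


def first_failure(trace: list[dict]) -> Optional[dict]:
    """Return the earliest failed step, since later failures are often downstream effects."""
    for index, step in enumerate(trace):
        if not step["ok"]:
            category = step.get("category")
            if category not in CATEGORIES:
                category = "uncategorized"
            return {"step_index": index, "step": step["name"], "category": category}
    return None


# Invented trace for illustration.
trace = [
    {"name": "parse_request", "ok": True},
    {"name": "lookup_order", "ok": False, "category": "tool_interpretation"},
    {"name": "send_refund", "ok": False, "category": "missing_clarification"},
]
print(first_failure(trace))
```

In this example the refund step also failed, but the diagnosis points at the earlier order lookup, which is where a durable fix would start.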

A Working Reliability Loop

A practical loop for most agent teams looks like this:

1. Write the tool policy before the agent can call anything expensive, destructive, or user-visible.

2. Add a clarification gate so incomplete requests do not turn into confident errors.

3. Design typed memory with confidence, scope, and expiration rules.

4. Review memory writes before the agent turns noise into durable truth.

5. Create the evaluation rubric before launch so quality is measurable.

6. Convert failures into regression tests so the system gets stricter over time.

7. Run postmortems from evidence so fixes address the first real failure point.

This sequence is not glamorous, but it is what keeps an agent steady when tasks get messy. The same discipline that makes an agent more reliable also makes it easier to audit, debug, and hand off between teams.

Pro-Tip: Separate Decision, Action, And Memory Steps

Do not let one prompt decide the plan, call the tool, interpret the tool result, write memory, and self-grade the outcome. Split those jobs across a chain of prompts: one to decide whether action is allowed, a second to interpret tool output, a third to decide whether anything deserves memory, and a fourth to score the result against your rubric. Claude is often stronger for policy wording, Gemini for wide-context synthesis, DeepSeek for structured evaluation design, and ChatGPT for quick operational gating.
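
In code, that separation can be as simple as passing each stage in as its own function. The following is a minimal sketch, assuming each callable would wrap a separate prompt or model call in a real system; `run_pipeline` and the stub stages are hypothetical and exist only so the example runs on its own.

```python
from typing import Any, Callable


def run_pipeline(
    request: str,
    decide: Callable[[str], str],
    act: Callable[[str], Any],
    interpret: Callable[[Any], str],
    remember: Callable[[str], list],
    grade: Callable[[str, str], float],
) -> dict:
    """Run each stage separately so no single step both acts and judges itself."""
    decision = decide(request)
    if decision != "continue":
        # Nothing is executed, stored, or graded when the gate says stop.
        return {"decision": decision, "result": None, "memory": [], "score": None}
    result = interpret(act(request))
    return {
        "decision": decision,
        "result": result,
        "memory": remember(result),
        "score": grade(request, result),
    }


# Stub stages so the sketch runs without any model or tool behind it.
outcome = run_pipeline(
    "look up order A-17",
    decide=lambda req: "continue" if "order" in req else "clarify",
    act=lambda req: {"status": "shipped"},
    interpret=lambda raw: f"Order status: {raw['status']}",
    remember=lambda result: [],
    grade=lambda req, result: 1.0 if "shipped" in result else 0.0,
)
print(outcome)
```

Because every stage is injected, each one can be tested, logged, or swapped to a different model without touching the others.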

Reliable agents are built the same way reliable teams are built: with clear responsibilities, durable records, and routines for learning from failure. Better prompts help, but better boundaries are what make that help hold up under real work.