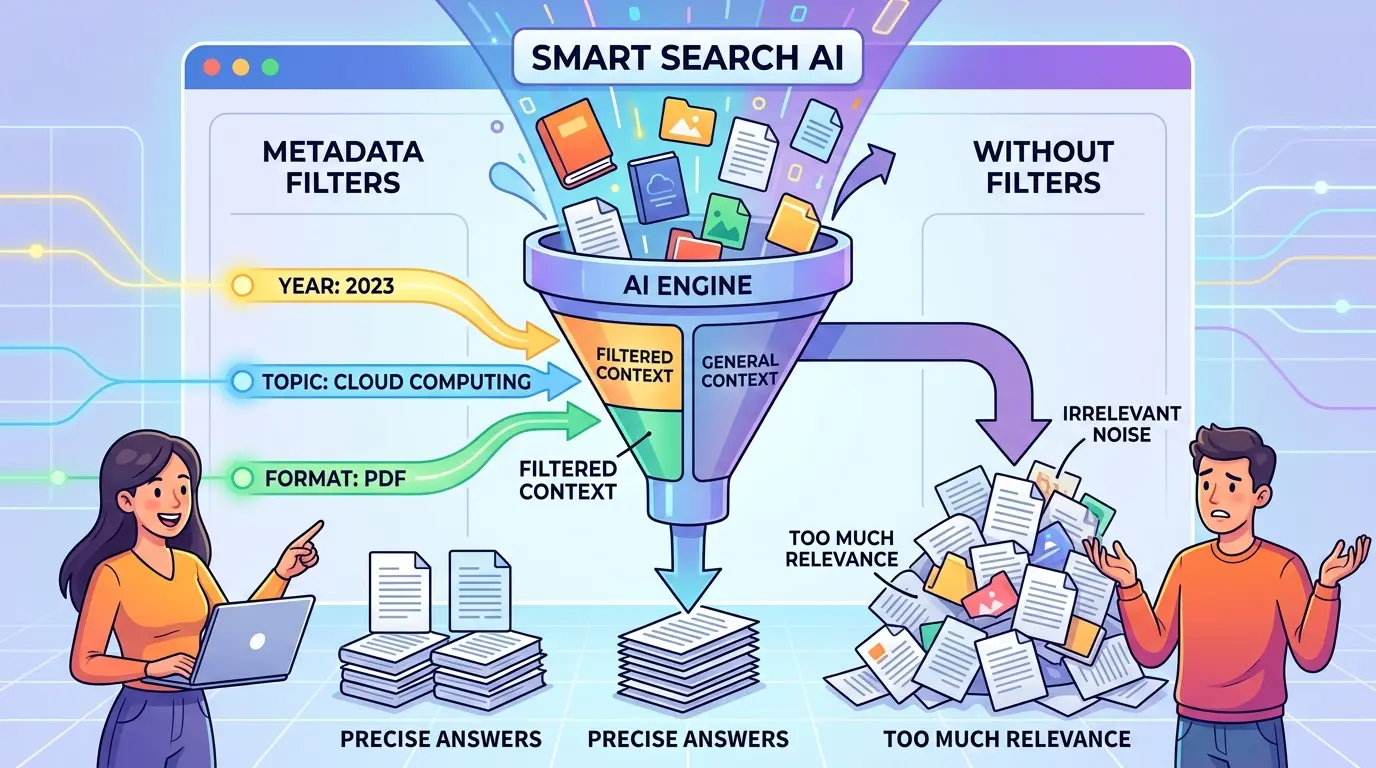

The failure mode is usually quiet. A RAG system returns documents that look semantically related, yet the answer is wrong because the retrieval step ignored the one constraint that actually mattered: tenant, jurisdiction, time range, product line, document type, or access scope. In other cases, the opposite happens: metadata filters become so aggressive that the system misses the best evidence entirely.

That is why metadata filtering in RAG needs more than a default checkbox in your vector database. ChatGPT, Gemini, Claude, and DeepSeek can all help you reason through this layer. The prompts below are written to work with any of them, and each one comes with a model recommendation where a particular strength helps. Used well, metadata filtering sharpens retrieval. Used blindly, it reduces recall, hides ranking problems, and creates brittle query behavior.

Before changing your filter logic, it helps to separate three questions: Which fields are true hard constraints? Which fields are weak hints? Which fields are too noisy to trust at retrieval time? If you are still deciding whether retrieval constraints should live in RAG at all, “The Context Window Trap: When to Choose RAG vs. Long-Context Models for Business Data” is a useful companion for that architecture decision.

Audit Which Metadata Fields Actually Improve Retrieval

Model Recommendation: DeepSeek is often a good fit for structured decomposition when you need to classify fields into hard constraints, soft ranking signals, and low-trust attributes.

You are helping me audit metadata fields in a retrieval-augmented generation system.

Context:

- Domain: [describe the dataset and users]

- Current metadata fields: [list fields]

- Typical query types: [list 5-10 representative queries]

- Current retrieval method: [keyword, dense, hybrid, reranker, etc.]

- Current failure patterns: [wrong tenant, stale docs, empty results, over-filtering, etc.]

Task:

1. Classify each metadata field into one of these buckets:

- Hard filter constraint

- Soft ranking signal

- Better embedded in document text

- Too noisy or incomplete for retrieval control

2. For each field, explain why.

3. Identify which fields should never be optional.

4. Identify which fields should be applied only for certain query intents.

5. Return the result as a table with columns:

field | recommended role | reason | risk if misused | example query impact

Do not give generic advice. Base the reasoning on the failure patterns and query types provided.

The Payoff: This prompt stops teams from treating every metadata column as equally trustworthy. It helps you distinguish security and scope filters from attributes that should influence ranking or chunk design instead.
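Once the audit table exists, it can be encoded so the retrieval layer enforces it. The following is a minimal sketch, not a prescribed implementation: the field names (`tenant_id`, `auto_topic_tag`, and so on), the `FieldPolicy` type, and the `split_query_metadata` helper are all hypothetical and stand in for whatever your own audit produces.

```python
from dataclasses import dataclass
from enum import Enum


class FieldRole(Enum):
    HARD_FILTER = "hard filter constraint"
    SOFT_SIGNAL = "soft ranking signal"
    EMBED_IN_TEXT = "better embedded in document text"
    UNTRUSTED = "too noisy or incomplete for retrieval control"


@dataclass(frozen=True)
class FieldPolicy:
    name: str
    role: FieldRole
    required: bool = False  # True means a query may never omit this field


# Hypothetical audit output; the field names are illustrative only.
POLICIES = [
    FieldPolicy("tenant_id", FieldRole.HARD_FILTER, required=True),
    FieldPolicy("jurisdiction", FieldRole.HARD_FILTER),
    FieldPolicy("publication_date", FieldRole.SOFT_SIGNAL),
    FieldPolicy("product_line", FieldRole.EMBED_IN_TEXT),
    FieldPolicy("auto_topic_tag", FieldRole.UNTRUSTED),
]


def split_query_metadata(query_meta, policies=POLICIES):
    """Split query-side metadata into hard filters and soft ranking signals."""
    filters, signals = {}, {}
    for policy in policies:
        value = query_meta.get(policy.name)
        if value is None:
            if policy.required:
                raise ValueError(f"missing required scope field: {policy.name}")
            continue
        if policy.role is FieldRole.HARD_FILTER:
            filters[policy.name] = value
        elif policy.role is FieldRole.SOFT_SIGNAL:
            signals[policy.name] = value
        # EMBED_IN_TEXT and UNTRUSTED fields are deliberately dropped here.
    return filters, signals
```

The point of the `required` flag is that a missing scope field fails loudly instead of silently widening the search.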

Detect Where Over-Filtering Is Killing Recall

Model Recommendation: Claude works well for careful reasoning when you need to inspect edge cases and explain why relevant evidence disappears.

Act as a retrieval diagnostics reviewer for a RAG system.

I will provide:

- User query

- Intended answer scope

- Filter expression used

- Top retrieved results

- Relevant result that was missed, if known

For each case:

1. Decide whether the metadata filter was appropriate, too broad, or too narrow.

2. Explain exactly how the filter reduced recall, if it did.

3. Identify whether the missed result failed because of:

- Missing metadata

- Incorrect metadata values

- Overly strict filter logic

- Query intent mismatch

- Bad chunking or document segmentation

4. Suggest the safest correction.

5. Label the correction as one of these:

- Relax the filter

- Make the filter conditional

- Move the field into reranking

- Fix metadata generation

- Redesign chunking

Return the output as a concise incident review for each query.

The Payoff: Over-filtering often looks like a ranking issue until you inspect the actual exclusion logic. This prompt gives you a disciplined way to tell whether the bug lives in metadata quality, filter syntax, or retrieval design.
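Before handing a case to a model, it can help to check mechanically which filter clause excluded the missed document. The sketch below assumes an equality-only filter expressed as a plain dictionary; `explain_exclusion` is a hypothetical helper, and real filter languages with ranges or set membership would need more cases.

```python
from __future__ import annotations


def explain_exclusion(filter_expr: dict, doc_meta: dict) -> list[str]:
    """Return one reason per filter clause that the document fails.

    Assumes an equality-only filter of the form {field: required_value}.
    An empty list means the filter did not exclude the document.
    """
    reasons = []
    for field, wanted in filter_expr.items():
        if field not in doc_meta or doc_meta[field] is None:
            reasons.append(f"{field}: missing metadata")
        elif doc_meta[field] == wanted:
            continue
        elif str(doc_meta[field]).strip().lower() == str(wanted).strip().lower():
            # Same value after trimming and lowercasing: a normalization bug.
            reasons.append(f"{field}: mis-normalized value {doc_meta[field]!r}")
        else:
            reasons.append(f"{field}: value {doc_meta[field]!r} != {wanted!r}")
    return reasons
```

An empty list from this check points away from the filter and toward ranking, chunking, or query intent.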

If the problem turns out to be poor chunk boundaries rather than poor filters, a tool like the RAG Chunking Visualizer can make that obvious quickly. Metadata filters cannot rescue chunks that mix multiple entities, time periods, or topics into the same embedding unit.

Design a Filter Strategy by Query Intent

Model Recommendation: ChatGPT is a strong day-to-day choice when you want to turn recurring query patterns into an operational ruleset your team can actually use.

Help me design a query-intent-aware metadata filtering policy for a RAG application.

Inputs:

- Application domain: [describe]

- Users: [internal staff, customers, analysts, legal team, etc.]

- Metadata fields available: [list]

- Common query categories: [factual lookup, policy question, troubleshooting, comparison, historical audit, etc.]

- Known risks: [privacy leakage, stale answers, empty retrieval, bad recall, latency]

Task:

1. Map each query category to the right metadata behavior:

- Required filters

- Optional filters

- No filters

- Rerank-only signals

2. Explain why that policy fits each query type.

3. Show what should happen when metadata is missing or low confidence.

4. Propose a fallback order if strict filtering returns too few results.

5. Output a decision matrix and a short implementation policy that an engineer can hand to the retrieval layer owner.

Favor robust behavior over perfect precision.

The Payoff: Metadata filtering should change with query intent, not just data availability. A policy lookup query and an exploratory troubleshooting query rarely deserve the same filter strictness.
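The fallback order from step 4 of the prompt can be sketched as a small relaxation loop. This is an illustration under assumptions: `search` is any callable that takes a filter dictionary and returns a list of documents, `toy_search` and `DOCS` are made up for the example, and the relaxation order would come from your own policy.

```python
from __future__ import annotations

from typing import Callable


def search_with_fallback(
    search: Callable[[dict], list],
    required: dict,
    optional: dict,
    relax_order: list[str],
    min_results: int = 3,
):
    """Relax optional filters one at a time until enough results come back.

    Required filters (for example access scope) are never relaxed.
    Returns (results, names of the filters that were dropped).
    """
    active = dict(optional)
    dropped = []
    results = search({**required, **active})
    for field in relax_order:
        if len(results) >= min_results:
            break
        if field in active:
            del active[field]
            dropped.append(field)
            results = search({**required, **active})
    return results, dropped


# Toy in-memory corpus standing in for a real retrieval backend.
DOCS = [
    {"id": 1, "tenant": "acme", "year": 2024, "doc_type": "policy"},
    {"id": 2, "tenant": "acme", "year": 2023, "doc_type": "policy"},
    {"id": 3, "tenant": "acme", "year": 2023, "doc_type": "faq"},
    {"id": 4, "tenant": "other", "year": 2024, "doc_type": "policy"},
]


def toy_search(filters: dict) -> list:
    return [d for d in DOCS if all(d.get(k) == v for k, v in filters.items())]


results, dropped = search_with_fallback(
    toy_search,
    required={"tenant": "acme"},
    optional={"year": 2024, "doc_type": "policy"},
    relax_order=["year", "doc_type"],
    min_results=2,
)
print([d["id"] for d in results], dropped)  # [1, 2] ['year']
```

Returning the list of dropped filters matters as much as the results: it lets the answer layer say that the evidence came from a wider scope than the user asked for.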

Compare Pre-Filter, Post-Filter, and Rerank Approaches

Model Recommendation: Gemini is useful when you need to synthesize multiple experiment notes, logs, and evaluation outputs into a single recommendation.

I am comparing three retrieval strategies in a RAG system:

1. Pre-filter before vector search

2. Retrieve first, then post-filter

3. Retrieve broadly, then rerank with metadata-aware scoring

I will provide:

- Query set

- Relevance judgments or expected sources

- Latency notes

- Empty-result cases

- False-positive cases

- Infrastructure constraints

Task:

1. Compare the three strategies for precision, recall, latency, and operational risk.

2. Identify which query classes benefit from each strategy.

3. Flag where pre-filtering creates brittle behavior.

4. Flag where post-filtering wastes retrieval budget.

5. Flag where reranking is the better compromise.

6. Recommend a hybrid strategy, not a one-size-fits-all answer.

7. Return the result as:

- executive summary

- query-class recommendation table

- rollout plan

The Payoff: This prompt prevents architecture debates from collapsing into slogans. It forces a trade-off review across latency, recall, cost, and empty-result risk instead of treating filter timing as a purely technical preference.
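The three strategies are easy to compare on a toy corpus before running them against real data. In this sketch each document carries a precomputed similarity score for a single query, which is an assumption that stands in for an actual vector search; the function names and the `boost` weight are likewise illustrative.

```python
# Each toy document carries a precomputed similarity score for one query,
# standing in for a real vector search (an assumption for illustration).
CORPUS = [
    {"id": "a", "score": 0.95, "tenant": "other"},
    {"id": "b", "score": 0.93, "tenant": "other"},
    {"id": "c", "score": 0.90, "tenant": "acme", "status": "draft"},
    {"id": "d", "score": 0.70, "tenant": "acme", "status": "current"},
    {"id": "e", "score": 0.65, "tenant": "acme", "status": "current"},
]


def top_k(docs, k):
    return sorted(docs, key=lambda d: d["score"], reverse=True)[:k]


def matches(doc, filters):
    return all(doc.get(f) == v for f, v in filters.items())


def pre_filter(docs, filters, k):
    """Filter first, then take the k most similar survivors."""
    return top_k([d for d in docs if matches(d, filters)], k)


def post_filter(docs, filters, k):
    """Take the k most similar documents, then drop the ones out of scope."""
    return [d for d in top_k(docs, k) if matches(d, filters)]


def rerank(docs, hard, soft, k, fetch=10, boost=0.3):
    """Hard filters still apply; soft fields only add a score bonus."""
    candidates = top_k([d for d in docs if matches(d, hard)], fetch)

    def adjusted(d):
        return d["score"] + boost * sum(d.get(f) == v for f, v in soft.items())

    return sorted(candidates, key=adjusted, reverse=True)[:k]


scope = {"tenant": "acme"}
pre_ids = [d["id"] for d in pre_filter(CORPUS, scope, k=2)]
post_ids = [d["id"] for d in post_filter(CORPUS, scope, k=2)]
rerank_ids = [d["id"] for d in rerank(CORPUS, scope, {"status": "current"}, k=2)]
print(pre_ids)     # ['c', 'd']
print(post_ids)    # []
print(rerank_ids)  # ['d', 'e']
```

Post-filtering returns nothing here because both retrieval slots were spent on another tenant's documents, which is the wasted-budget case the prompt asks the model to flag. The rerank variant keeps tenant as a hard filter and lets document status shift the order without excluding the draft outright.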

Platform behavior also matters here. Some vector systems push filters down efficiently, while others degrade sharply under certain combinations of cardinality and hybrid search. “Vector Database Showdown: Pinecone vs. Milvus vs. Weaviate for Enterprise RAG” is worth reading when the storage engine itself may be shaping the trade-off.

Decide Whether Metadata Belongs in Filters, Embeddings, or Prompts

Model Recommendation: Claude is often a good fit for nuanced placement decisions where the wrong layer creates hidden coupling.

Act as a RAG architecture reviewer.

For each attribute below, decide whether it should primarily live in:

- retrieval filters

- document text or chunk text

- embedding representation

- reranker features

- answer-generation prompt context

Attributes to review:

[list attributes such as region, product family, user role, jurisdiction, issue severity, publication date, doc status]

For each attribute:

1. Recommend the primary layer.

2. List any secondary layer that may still use it.

3. Explain the failure mode if it is placed in the wrong layer.

4. Give one example query where the correct placement matters.

Finish with a summary of which attributes should be treated as hard scope controls versus semantic relevance signals.

The Payoff: Many RAG failures happen because teams push a concept into the wrong layer. Access scope usually belongs in filters. Topical nuance often belongs in text, embeddings, or reranking. Mixing those roles creates unstable retrieval behavior.

Debug Empty Results and False Negatives Fast

Model Recommendation: DeepSeek works well when you need a strict diagnostic checklist that narrows failure causes quickly.

You are debugging a RAG retrieval pipeline that returns empty results or misses the best evidence.

I will provide:

- Query

- Applied metadata filters

- Candidate document that should have matched

- Candidate document metadata

- Retrieval logs or simplified trace

Task:

1. Walk through the failure in this exact order:

- Was the document excluded by filter logic?

- Was the metadata missing, stale, or mis-normalized?

- Did chunking split the relevant evidence incorrectly?

- Did ranking bury the right result after retrieval?

- Did the query intent require a broader first-pass search?

2. Produce a root-cause verdict.

3. Suggest one immediate fix and one longer-term system fix.

4. Mark the incident severity based on user impact and recurrence risk.

Return a concise debugging report with no filler.

The Payoff: This is the kind of prompt you use when production incidents start piling up. It gives you a repeatable path from symptom to root cause instead of letting the team guess between indexing, ranking, metadata, and chunking.
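The first four checks in that ordered walk-through can be automated when the trace contains the ranked result ids. The sketch below is one possible shape, assuming equality filters and a hypothetical `triage` helper; the last check, query intent, still needs human or model judgment.

```python
def triage(filters: dict, doc_meta: dict, ranked_ids: list, doc_id: str, k: int) -> str:
    """Return the first failure cause that applies, in the order checked."""
    # Steps 1-2: filter exclusion, split by whether the metadata itself is at fault.
    for field, wanted in filters.items():
        if doc_meta.get(field) is None:
            return f"metadata: {field} missing on document"
        if doc_meta[field] != wanted:
            return f"filter: {field}={doc_meta[field]!r} excluded by {wanted!r}"
    # Step 3: passed the filter but never surfaced, so suspect chunking or embedding.
    if doc_id not in ranked_ids:
        return "chunking: document passed filters but was not retrieved"
    # Step 4: retrieved, but below the cutoff handed to the generator.
    rank = ranked_ids.index(doc_id) + 1
    if rank > k:
        return f"ranking: retrieved at rank {rank}, cutoff is {k}"
    return "retrieved: look at query intent or generation instead"
```

Returning only the first cause is deliberate: it mirrors the fixed order in the prompt and keeps incident reports comparable across cases.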

Build an Evaluation Set for Metadata Filter Regressions

Model Recommendation: Gemini is useful when you want to turn many documents, support tickets, and failure examples into a structured evaluation plan.

Help me create an evaluation set for metadata filtering in a RAG system.

Inputs:

- Domain and user types: [describe]

- Available metadata fields: [list]

- Known risk categories: [privacy leak, stale answer, wrong tenant, empty retrieval, wrong jurisdiction, etc.]

- Historical bad examples: [paste cases]

Task:

1. Create a test set of query scenarios that specifically stress metadata behavior.

2. Include positive and negative cases for each major field.

3. Include cases where filters should be strict, relaxed, conditional, or absent.

4. For each test case, define:

- query

- intended scope

- metadata expectation

- minimum acceptable retrieval behavior

- likely regression signature

5. Group the cases by failure mode so the set can be used in CI or offline evaluation.

Make this rigorous enough for retrieval policy changes, not just demo testing.

The Payoff: Metadata changes break systems quietly unless you test for them directly. This prompt helps you build a regression harness around scope leakage, stale evidence, and recall collapse before those failures hit users.
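The test cases the prompt produces can be stored in a form that a CI job checks directly. This is a minimal sketch under assumptions: `FilterCase` and `check_case` are hypothetical names, the scope check is equality-only, and `retrieved` is whatever list of documents with metadata your pipeline returns.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FilterCase:
    query: str
    failure_mode: str    # e.g. "scope leakage", "recall collapse"
    allowed_scope: dict  # metadata every returned document must match
    must_include: set = field(default_factory=set)  # doc ids that must appear


def check_case(case: FilterCase, retrieved: list[dict]) -> list[str]:
    """Return a list of regression messages; an empty list means the case passes."""
    problems = []
    for doc in retrieved:
        for name, value in case.allowed_scope.items():
            if doc.get(name) != value:
                problems.append(
                    f"scope leakage: {doc['id']} has {name}={doc.get(name)!r}"
                )
    missing = case.must_include - {d["id"] for d in retrieved}
    if missing:
        problems.append(f"recall collapse: missing {sorted(missing)}")
    return problems
```

Grouping cases by `failure_mode` makes it possible to report, for any policy change, which class of regression it introduced.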

Pro-Tip: Chain Diagnosis Before Policy Changes

Start with the field audit, then run the over-filtering review, then use the query-intent policy prompt. That sequence keeps you from “fixing” recall by removing a field that was actually protecting access scope, or from tightening a filter that should have been a reranking feature instead. If cost is part of the debate, the AI Token Calculator can help quantify whether broader retrieval is actually expensive enough to justify stricter filtering.

The strongest RAG systems treat metadata as a precision tool, not a universal shortcut. Over time, the teams that improve fastest are the ones that evaluate filters by query intent, field trust, and failure mode instead of by instinct.