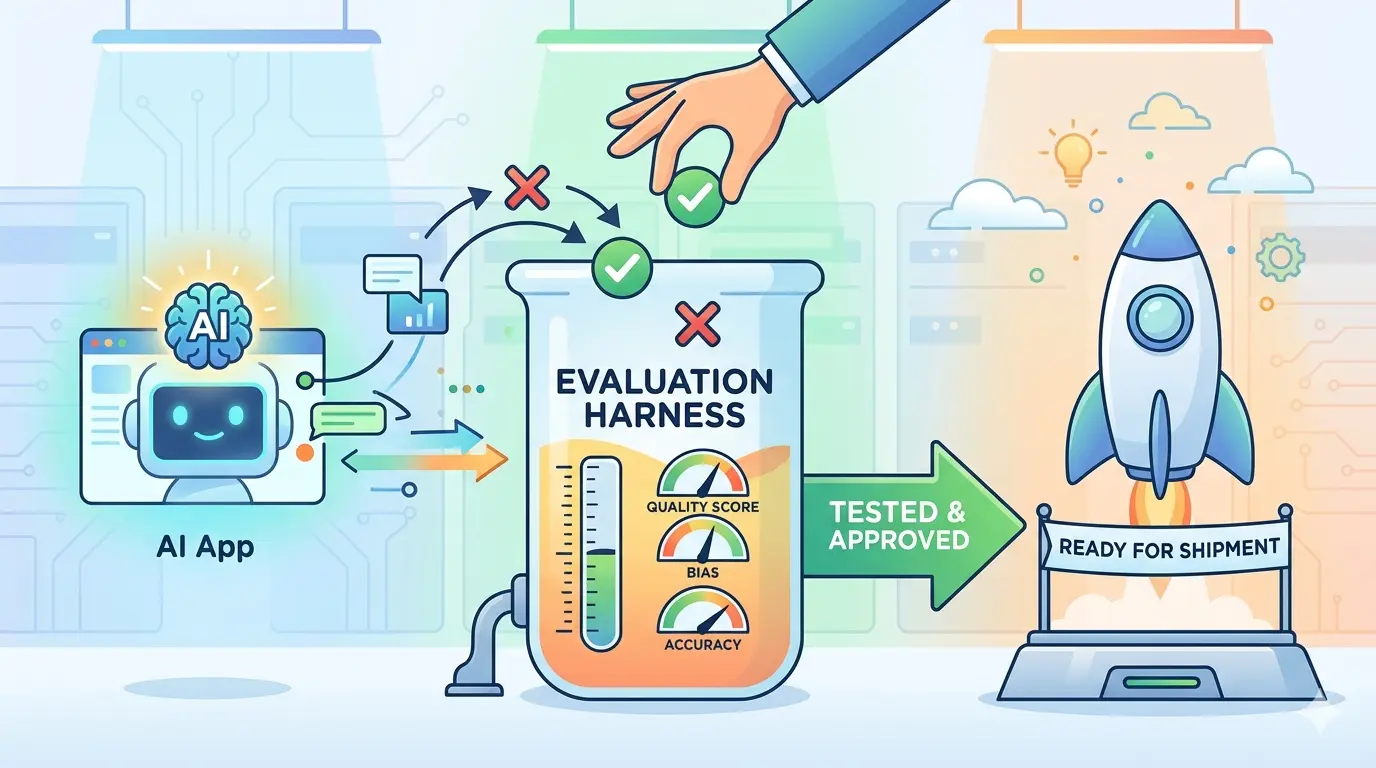

Teams hit the same bottleneck over and over: an AI feature looks sharp in a demo, answers a few happy-path examples, and still breaks once real users, messy context, tool calls, and policy constraints show up together. An evaluation harness is the missing layer. It is a repeatable system for running representative test cases through your AI workflow, scoring the outputs, comparing changes, and surfacing regressions before users find them.

That matters whether you are working with ChatGPT, Gemini, Claude, or DeepSeek. All four can support serious evaluation work, but they tend to shine in different places. The prompts below are written as a model-agnostic foundation for AI engineers, product teams, and applied researchers who need a reusable evaluation workflow for real AI apps, not just one-off benchmark screenshots.
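To make the idea of a harness concrete, here is a minimal sketch of the loop described above: run cases, score outputs, and compare a candidate against a baseline. Everything in it is an assumption for illustration. `EvalCase`, `run_harness`, `find_regressions`, and the two toy apps are hypothetical names, and the substring check stands in for whatever rubric or automatic check your team actually uses.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    case_id: str
    prompt: str
    must_contain: str  # toy scoring rule; a real harness would use a rubric or checks


def run_harness(app: Callable[[str], str], cases: list[EvalCase]) -> dict[str, bool]:
    """Run every case through the app and record pass/fail per case ID."""
    results = {}
    for case in cases:
        output = app(case.prompt)
        results[case.case_id] = case.must_contain.lower() in output.lower()
    return results


def find_regressions(baseline: dict[str, bool], candidate: dict[str, bool]) -> list[str]:
    """Return case IDs that passed on the baseline but fail on the candidate."""
    return sorted(
        cid for cid, ok in baseline.items() if ok and not candidate.get(cid, False)
    )


# Hypothetical stand-ins for two versions of the same AI workflow.
def app_v1(prompt: str) -> str:
    return "You can request a refund within 30 days."


def app_v2(prompt: str) -> str:
    return "Please contact support."


cases = [EvalCase("refund-001", "How do I get my money back?", "refund")]
baseline = run_harness(app_v1, cases)
candidate = run_harness(app_v2, cases)
regressions = find_regressions(baseline, candidate)
print(regressions)
```

The point of the sketch is the shape, not the scoring rule: once results are keyed by case ID, every later change can be rerun and compared against the same cases.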

Define The Real Job First

Model Recommendation: Claude is often the better fit when you need careful reasoning about product requirements, failure definitions, and evaluation scope.

You are an AI evaluation architect.

I am building an evaluation harness for this AI app:

[describe the app, target user, task, tools, data sources, and business outcome]

Do not start with generic benchmark advice.

Start by defining what success means in the real workflow.

Return:

1. The primary user job to be done

2. The operational constraints that matter in production

3. The top failure modes that would damage trust, safety, or usability

4. The evaluation dimensions I should measure

5. The minimum release bar for each dimension

6. A short list of test scenarios that must exist in the harness before launch

Keep the answer concrete and product-specific.

The Payoff: Most teams start evaluating too late because they never define what the system is actually supposed to do under pressure. This prompt gives the harness a real contract instead of a vague goal like “be helpful” or “sound accurate.”

A strong harness also keeps reliability separate from hype. If your team is still treating quality as prompt wording alone, the broader system view in “What Makes an AI Agent Reliable? AI Prompts for Tool Use, Memory, and Evaluation” is a useful companion.

Turn Real Usage Into Eval Cases

Model Recommendation: Gemini works well for multi-document synthesis when you need to combine support tickets, transcripts, human reviews, and product notes into a usable test set.

You are building evaluation cases from real production evidence.

I will provide some combination of:

- support tickets

- conversation transcripts

- human-reviewed outputs

- QA notes

- escalation records

- prompt or tool traces

Convert this material into reusable evaluation cases.

For each case, return:

1. Case ID

2. User intent

3. Hidden context the model would need

4. Expected useful behavior

5. Common failure pattern

6. Severity if the model fails

7. Tags for grouping similar cases

Then organize the output into:

- a balanced core eval set

- a hard-case eval set

- a release-blocker eval set

Remove redundant noise, but preserve the real production difficulty.

The Payoff: Synthetic evals are easy to pass because they are usually too clean. This prompt turns actual user friction into structured coverage, which is what makes the harness reflect the job your app is really doing.
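One way to store the cases this prompt returns is a small record type with the seven requested fields, plus a rule that sorts each case into one of the three sets. The sketch below is an assumption, not a prescribed format: the `EvalCase` fields mirror the prompt's output list, while the severity labels, the `"hard"` tag convention, and the `assign_set` rule are hypothetical choices you would replace with your own.

```python
from dataclasses import dataclass, field


@dataclass
class EvalCase:
    case_id: str
    user_intent: str
    hidden_context: str
    expected_behavior: str
    failure_pattern: str
    severity: str  # assumed labels: "low", "medium", "high", "critical"
    tags: list[str] = field(default_factory=list)


def assign_set(case: EvalCase) -> str:
    """Assumed routing rule: critical cases block release, tagged hard cases go to the hard set."""
    if case.severity == "critical":
        return "release_blocker"
    if "hard" in case.tags:
        return "hard"
    return "core"


def organize(cases: list[EvalCase]) -> dict[str, list[str]]:
    """Group case IDs into the core, hard-case, and release-blocker sets."""
    suites: dict[str, list[str]] = {"core": [], "hard": [], "release_blocker": []}
    for case in cases:
        suites[assign_set(case)].append(case.case_id)
    return suites


cases = [
    EvalCase("T-001", "reset password", "user has SSO enabled",
             "point to the SSO provider", "gives generic reset steps", "medium"),
    EvalCase("T-002", "cancel subscription", "annual plan with refund window",
             "explain refund terms", "promises a refund it cannot grant", "critical",
             ["billing"]),
    EvalCase("T-003", "merge two accounts", "accounts are in different regions",
             "escalate to a human", "invents a merge procedure", "high",
             ["hard", "accounts"]),
]
suites = organize(cases)
print(suites)
```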

Generate Edge Cases The Happy Path Hides

Model Recommendation: DeepSeek is useful when you need structured decomposition of brittle logic, edge conditions, and compound failure scenarios.

You are generating edge-case tests for an AI application.

Base workflow:

[describe the normal task]

Create 20 high-value stress cases that challenge the system with variations such as:

- vague or underspecified requests

- contradictory follow-up instructions

- missing required context

- noisy retrieved documents

- tool failure or timeout

- malformed structured inputs

- risky or policy-sensitive user requests

- prompt injection attempts

- long-context distraction

- user frustration or urgency

For each stress case, return:

1. Scenario name

2. Test input

3. Why weak systems fail here

4. What a production-quality response should do

5. A pass/fail checklist

6. Whether the case belongs in smoke tests, full regression, or release-blocker review

Make the scenarios realistic for business workflows, not theatrical internet examples.

The Payoff: A harness is weak if it only confirms the system works when the user behaves perfectly. This prompt expands the suite into the conditions where confidence usually breaks.
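As an illustration of one stress case from the list, the sketch below simulates a tool timeout and applies a pass/fail checklist to the response. All names are hypothetical: `flaky_lookup` stands in for a failing tool, `answer_order_status` for the app under test, and the string-matching checklist is a deliberately crude assumption that a real harness would replace with stronger checks.

```python
from typing import Callable


def flaky_lookup(order_id: str) -> str:
    """Hypothetical tool that always times out, to simulate the stress condition."""
    raise TimeoutError("order service did not respond")


def answer_order_status(order_id: str, lookup: Callable[[str], str]) -> str:
    """Toy app under test: falls back gracefully instead of guessing a status."""
    try:
        status = lookup(order_id)
    except TimeoutError:
        return (
            "I could not reach the order system right now. I have not checked "
            "your order status, so please try again shortly or ask for a human agent."
        )
    return f"Order {order_id} is {status}."


def checklist(response: str) -> dict[str, bool]:
    """Pass/fail checklist for the tool-timeout scenario."""
    text = response.lower()
    return {
        "admits_tool_failure": "could not reach" in text,
        "no_invented_status": "shipped" not in text and "delivered" not in text,
        "offers_next_step": "try again" in text or "human agent" in text,
    }


response = answer_order_status("A-1042", flaky_lookup)
result = checklist(response)
passed = all(result.values())
print(result, passed)
```

Injecting the tool as a parameter is the design choice that matters here: it lets the harness swap in a failing tool without touching the app's own logic.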

Build A Scoring Rubric Humans Can Reuse

Model Recommendation: Claude is often the better fit for nuanced scoring criteria, judgment anchors, and reusable review rubrics.

You are designing a human evaluation rubric for an AI app.

The app does this:

[describe task]

Create a scoring rubric for these dimensions:

- correctness

- completeness

- groundedness

- format reliability

- policy compliance

- tool-use quality

- user usefulness

For each dimension, provide:

1. A plain-English definition

2. A 1 to 5 scoring scale with clear anchors

3. What counts as an automatic fail

4. What evidence a reviewer should look for

5. Common reviewer mistakes to avoid

Then add:

- one overall pass/fail rule

- one release-blocker rule

- one example of a borderline case

The Payoff: Without a stable rubric, reviewers drift into taste-based judgments. This prompt makes scoring repeatable so the harness produces decisions your team can defend.
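Once reviewers score each dimension from 1 to 5, the overall pass/fail rule can be applied mechanically. The sketch below shows one possible rule under stated assumptions: the dimension names come from the prompt, but the thresholds (`min_score=3`, `min_mean=4.0`) and the function `overall_verdict` are hypothetical and should be replaced by whatever rule your rubric actually defines.

```python
DIMENSIONS = (
    "correctness",
    "completeness",
    "groundedness",
    "format reliability",
    "policy compliance",
    "tool-use quality",
    "user usefulness",
)


def overall_verdict(
    scores: dict[str, int],
    auto_fails: tuple[str, ...] = (),
    min_score: int = 3,      # assumed floor for any single dimension
    min_mean: float = 4.0,   # assumed floor for the average across dimensions
) -> str:
    """Apply an assumed overall rule: any automatic fail or weak dimension fails the case."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")
    values = [scores[d] for d in DIMENSIONS]
    if any(not 1 <= v <= 5 for v in values):
        raise ValueError("scores must be between 1 and 5")
    if auto_fails:
        return "fail"
    if min(values) < min_score:
        return "fail"
    return "pass" if sum(values) / len(values) >= min_mean else "fail"


solid = {d: 4 for d in DIMENSIONS}
uneven = dict(solid, groundedness=2)  # one weak dimension
print(overall_verdict(solid))
print(overall_verdict(uneven))
print(overall_verdict(solid, auto_fails=("policy compliance",)))
```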

Add Automatic Checks For Deterministic Failures

Model Recommendation: ChatGPT is a practical choice for fast iteration on structured-output checks, schema validation ideas, and day-to-day QA workflows.

You are a QA engineer building automatic checks for an AI app.

The app must satisfy these hard requirements:

[paste schema, output contract, citation rules, refusal rules, tool-call rules, or formatting constraints]

Design an automatic validation layer.

Return:

1. The deterministic checks that can be automated immediately

2. The inputs that should trigger each check

3. The exact failure condition for each rule

4. Which failures should block release

5. Which failures should trigger human review instead

6. A compact regression checklist for CI or pre-release testing

Assume the goal is to reduce human review load by catching obvious failures automatically.

The Payoff: Humans should spend time on ambiguity, judgment, and business quality, not on spotting broken JSON or missing citations. Automatic checks give the harness a fast, reliable floor.
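The two examples named here, broken JSON and missing citations, are easy to check with the standard library. The sketch below assumes a hypothetical output contract: a JSON object with `answer` and `citations` keys, where the answer refers to sources with markers like `[1]`. Your real contract will differ, so treat `REQUIRED_KEYS`, the marker format, and the failure labels as placeholders.

```python
import json
import re

REQUIRED_KEYS = {"answer", "citations"}      # hypothetical output contract
CITATION_MARKER = re.compile(r"\[(\d+)\]")   # assumed marker style, e.g. "[1]"


def check_output(raw: str) -> list[str]:
    """Return a list of deterministic failures; an empty list means the output passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["invalid_json"]
    if not isinstance(data, dict):
        return ["not_an_object"]

    missing = REQUIRED_KEYS - set(data)
    if missing:
        return ["missing_keys:" + ",".join(sorted(missing))]

    citations = data["citations"]
    if not isinstance(citations, list):
        return ["citations_not_a_list"]

    failures = []
    if not citations:
        failures.append("no_citations")
    # Every [n] marker in the answer must point at an existing citation (1-based).
    for marker in CITATION_MARKER.findall(str(data["answer"])):
        if not 1 <= int(marker) <= len(citations):
            failures.append(f"dangling_citation:[{marker}]")
    return failures


print(check_output('{"answer": "See [1].", "citations": ["doc-a"]}'))
print(check_output('{"answer": "See [2].", "citations": ["doc-a"]}'))
print(check_output("not json"))
```

Returning failure labels rather than a single boolean makes it straightforward to decide per label which failures block release and which only trigger human review.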

Compare Prompts, Models, And System Changes Side By Side

Model Recommendation: ChatGPT works well for repeated comparison tasks where you need fast tables, practical tradeoff summaries, and clean regression notes.

You are comparing two or more AI system variants.

I will provide some combination of:

- prompt version A vs prompt version B

- model A vs model B

- retrieval strategy A vs retrieval strategy B

- tool policy A vs tool policy B

- previous output vs new output

Evaluate the differences against this task:

[describe task and success criteria]

Return a comparison table with these columns:

1. Variant

2. Strengths

3. Weaknesses

4. New failure risks introduced

5. Cases it improves

6. Cases it worsens

7. Whether the change should ship, be revised, or be rejected

Then write a concise regression summary for stakeholders.

The Payoff: Most AI iteration fails because teams compare changes by memory, not evidence. This prompt makes the harness useful for controlled A/B evaluation instead of anecdotal debate.
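Comparing by evidence can be as simple as diffing per-case results between two variants. The sketch below assumes each variant has already been run and reduced to a mapping of case ID to pass/fail. The function `compare_variants` and the sample data are hypothetical.

```python
def compare_variants(baseline: dict[str, bool], candidate: dict[str, bool]) -> dict:
    """Diff two result sets over the case IDs they share."""
    shared = sorted(baseline.keys() & candidate.keys())
    if not shared:
        raise ValueError("no shared case IDs to compare")

    def pass_rate(results: dict[str, bool]) -> float:
        return sum(results[c] for c in shared) / len(shared)

    return {
        "improved": [c for c in shared if not baseline[c] and candidate[c]],
        "worsened": [c for c in shared if baseline[c] and not candidate[c]],
        "baseline_pass_rate": pass_rate(baseline),
        "candidate_pass_rate": pass_rate(candidate),
    }


# Hypothetical results for prompt version A (baseline) and version B (candidate).
prompt_a = {"c1": True, "c2": False, "c3": True, "c4": True}
prompt_b = {"c1": True, "c2": True, "c3": False, "c4": True}
report = compare_variants(prompt_a, prompt_b)
print(report)
```

In this sample both variants have the same pass rate, yet one case improved and another regressed. That is why the per-case lists matter more than the aggregate number.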

When experiments start expanding token spend, the AI Token Calculator is a practical way to estimate whether broader eval coverage is still cost-safe before you scale the suite.

Diagnose Failures Before You Retune Everything

Model Recommendation: DeepSeek is often the better fit when you need a structured root-cause breakdown across prompting, retrieval, tools, and post-processing.

You are diagnosing a failed AI system run.

I will provide:

- the user request

- the system or developer prompt

- retrieved context

- tool calls and outputs

- final response

- expected behavior

- failure complaint

Identify the most likely primary root cause.

Classify the failure into one main bucket:

- bad evaluation case design

- prompt scope failure

- retrieval failure

- reasoning failure

- tool selection failure

- tool output handling failure

- parser or schema failure

- policy handling failure

- missing fallback or escalation logic

Then return:

1. Evidence for the diagnosis

2. The smallest fix to test first

3. A regression case to add to the harness

4. What metric or trace should be watched after the fix

5. What not to change yet

The Payoff: Weak teams respond to a bad result by rewriting everything at once. This prompt keeps the harness tied to disciplined debugging, which is how quality actually improves instead of failures just moving from one component to another.
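If each diagnosis is recorded with exactly one bucket, the team can see which part of the system fails most often before deciding what to retune. The sketch below is one possible record format. The bucket names come from the prompt, while `Diagnosis`, `bucket_counts`, and the sample entries are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class FailureBucket(Enum):
    EVAL_CASE_DESIGN = "bad evaluation case design"
    PROMPT_SCOPE = "prompt scope failure"
    RETRIEVAL = "retrieval failure"
    REASONING = "reasoning failure"
    TOOL_SELECTION = "tool selection failure"
    TOOL_OUTPUT_HANDLING = "tool output handling failure"
    PARSER_OR_SCHEMA = "parser or schema failure"
    POLICY_HANDLING = "policy handling failure"
    MISSING_FALLBACK = "missing fallback or escalation logic"


@dataclass(frozen=True)
class Diagnosis:
    run_id: str
    bucket: FailureBucket      # exactly one main bucket per failed run
    smallest_fix: str
    regression_case_id: str    # case to add to the harness


def bucket_counts(diagnoses: list[Diagnosis]) -> list[tuple[FailureBucket, int]]:
    """Count diagnoses per bucket, most frequent first."""
    return Counter(d.bucket for d in diagnoses).most_common()


# Hypothetical diagnoses from three failed runs.
diagnoses = [
    Diagnosis("run-17", FailureBucket.RETRIEVAL, "raise top-k from 3 to 5", "R-031"),
    Diagnosis("run-22", FailureBucket.PARSER_OR_SCHEMA, "validate before parsing", "R-032"),
    Diagnosis("run-25", FailureBucket.RETRIEVAL, "filter stale documents", "R-033"),
]
counts = bucket_counts(diagnoses)
print(counts[0])
```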

Set Release Gates And Ongoing Review Cadence

Model Recommendation: Claude works well for release policy, threshold setting, and balancing quality standards with operational realism.

You are defining release gates for an AI application's evaluation harness.

System context:

[describe the app, risk level, user type, and business stakes]

Current evaluation dimensions:

[paste dimensions, rubric summary, automatic checks, or test categories]

Design a release policy that includes:

1. Smoke-test requirements

2. Full-regression requirements

3. Human-review requirements

4. Automatic blocker conditions

5. Minimum acceptable scores by dimension

6. Rules for approving, holding, or rolling back a change

7. A weekly or per-release review cadence

8. A short escalation path when quality drops after launch

Make the policy strict enough to prevent avoidable regressions, but realistic enough for an operating product team.

The Payoff: A harness is not just a testing artifact. It becomes operational leverage once it tells the team what must pass, what can degrade temporarily, and when a release should stop.
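A release policy like this can be reduced to a small decision function once the minimum scores and blocker conditions are written down. The sketch below is an assumption about how such a gate might look. `release_decision`, the 0 to 1 score scale, and the example thresholds are hypothetical and not taken from any specific policy.

```python
def release_decision(
    scores: dict[str, float],
    minimums: dict[str, float],
    blocker_failures: list[str],
    already_live: bool = False,
) -> tuple[str, list[str]]:
    """Return ("approve" | "hold" | "roll back", reasons) under an assumed gate policy."""
    reasons = [f"release-blocker case failed: {cid}" for cid in blocker_failures]
    for dimension, floor in minimums.items():
        score = scores.get(dimension)
        if score is None:
            reasons.append(f"{dimension}: no score recorded")
        elif score < floor:
            reasons.append(f"{dimension}: {score:.2f} is below the minimum {floor:.2f}")
    if not reasons:
        return "approve", []
    # A failing change that is already in production is rolled back, otherwise held.
    return ("roll back" if already_live else "hold"), reasons


# Hypothetical thresholds and scores on a 0-1 scale.
minimums = {"correctness": 0.90, "groundedness": 0.85, "policy compliance": 1.00}
scores = {"correctness": 0.93, "groundedness": 0.80, "policy compliance": 1.00}

decision, reasons = release_decision(scores, minimums, blocker_failures=[])
print(decision, reasons)
```

Returning the reasons alongside the decision keeps the gate explainable, which helps when the review cadence or escalation path needs to reference why a release stopped.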

That release discipline gets much stronger when failures are traced instead of guessed, which is where “Full-Stack AI Observability: Tracing Agentic Loops with OpenTelemetry & Arize” becomes a natural extension of the harness.

Pro-Tip

Run these prompts as a chain instead of using them in isolation. Start by defining the real job, turn real evidence into eval cases, add stress tests, build the rubric, automate deterministic checks, compare system changes, diagnose failures, and only then update release gates. The more context you provide about user segments, tool traces, policies, and expected outcomes, the less likely your harness is to reward outputs that merely look polished.

A good evaluation harness does not make an AI app perfect. It makes quality visible. Once your team can rerun representative cases, score them with consistent rules, and explain failures with evidence, shipping decisions stop being guesswork and start becoming engineering.